Meta agents hit the stack: RUNSTACK unveils self-building OS

RUNSTACK introduced a meta agent platform that learns integrations and supervises fleets of task agents. Here is why A2A and MCP matter, how this differs from today’s bot builders, and the signals to watch before you adopt.

What just happened

On November 5, 2025, RUNSTACK announced a meta agent platform that aims to assemble and maintain its own integrations while coordinating fleets of specialized agents. Think of it as an operating system for agents that learns external tools, patches broken links, and supervises the work of subordinate agents. That is a bold claim, and it marks an important shift in the field: from single chatbots to autonomous, cooperative AI organizations. The company’s press release, “RUNSTACK announces meta agent systems,” lays out the pitch.

In plain language, the news signals a move away from one bot per task. Instead, imagine a small firm made up of software employees. A meta agent acts like the managing partner. It hires specialized agents, teaches them how to use new tools, checks their work, and replaces or retrains them when something breaks. The firm keeps running while tools change around it.

Why this matters now

Most agent deployments stall for two familiar reasons. First, brittle integrations. An endpoint moves, a permission expires, or a new tool appears, and the whole workflow freezes until a human repairs the connection. Second, weak oversight. An agent does not escalate when it is unsure, repeats an error, or misses a dependency in another system. The market needs systems that can learn tools continuously and supervise work like a responsible manager. That is the promise on display.

The big idea: self-building plus oversight

Self-building and structured oversight are the two pillars of the meta agent approach.

Self-building explained

Self-building means the platform can discover an external service, learn how to call it, validate those calls with automated tests, and publish a reusable connector. Picture a junior developer who reads an API manual, writes a client, runs test calls, and then documents the quirks for the rest of the team. The RUNSTACK pitch describes this junior developer as software embedded inside the platform. The effect is faster tool learning and fewer dead ends when an integration drifts.

Concretely, self-building should include these steps:

- Discovery of available endpoints and authentication models.

- Generation of a minimal client and test suite for common paths.

- Automatic probing for rate limits, pagination, and error semantics.

- Field mapping and schema alignment across systems.

- Publishing a connector with versioning, docs, and guards.

When this works, a new tool becomes a teachable task rather than a weeks-long integration project.
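The probe, validate, and publish steps above can be sketched in a few lines. This is a minimal illustration, not RUNSTACK's implementation: `Connector`, `fake_service`, and `learn_connector` are hypothetical names, and the stand-in service replaces the HTTP calls a real probe would make.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Connector:
    """A published connector: validated operations plus documented quirks."""
    name: str
    version: str
    operations: dict = field(default_factory=dict)
    notes: list = field(default_factory=list)


def fake_service(op: str, page: int = 1) -> dict:
    """Hypothetical stand-in for an external API; a real probe would issue HTTP calls."""
    if op != "list_quotes":
        return {"status": 404, "error": "unknown operation"}
    first = (page - 1) * 2 + 1
    return {
        "status": 200,
        "items": [{"id": first}, {"id": first + 1}],
        "next_page": page + 1 if page < 2 else None,
    }


def learn_connector(name: str, candidate_ops: list, call: Callable[..., dict]) -> Connector:
    """Probe each candidate operation and keep only the ones that pass a test call."""
    connector = Connector(name=name, version="0.1.0")
    for op in candidate_ops:
        response = call(op)
        if response["status"] != 200:
            connector.notes.append(f"{op}: rejected, status {response['status']}")
            continue
        if "next_page" in response:
            connector.notes.append(f"{op}: paginated via next_page")
        # Bind op as a default argument so each wrapper keeps its own operation name.
        connector.operations[op] = lambda _op=op, **params: call(_op, **params)
    return connector


connector = learn_connector("quotes", ["list_quotes", "delete_everything"], fake_service)
print(sorted(connector.operations))
print(connector.notes)
```

Only the operation that survived its test call is published, and the notes record both the pagination quirk and the rejected candidate. A production version would also need authentication handling, rate-limit probing, and a real test suite.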

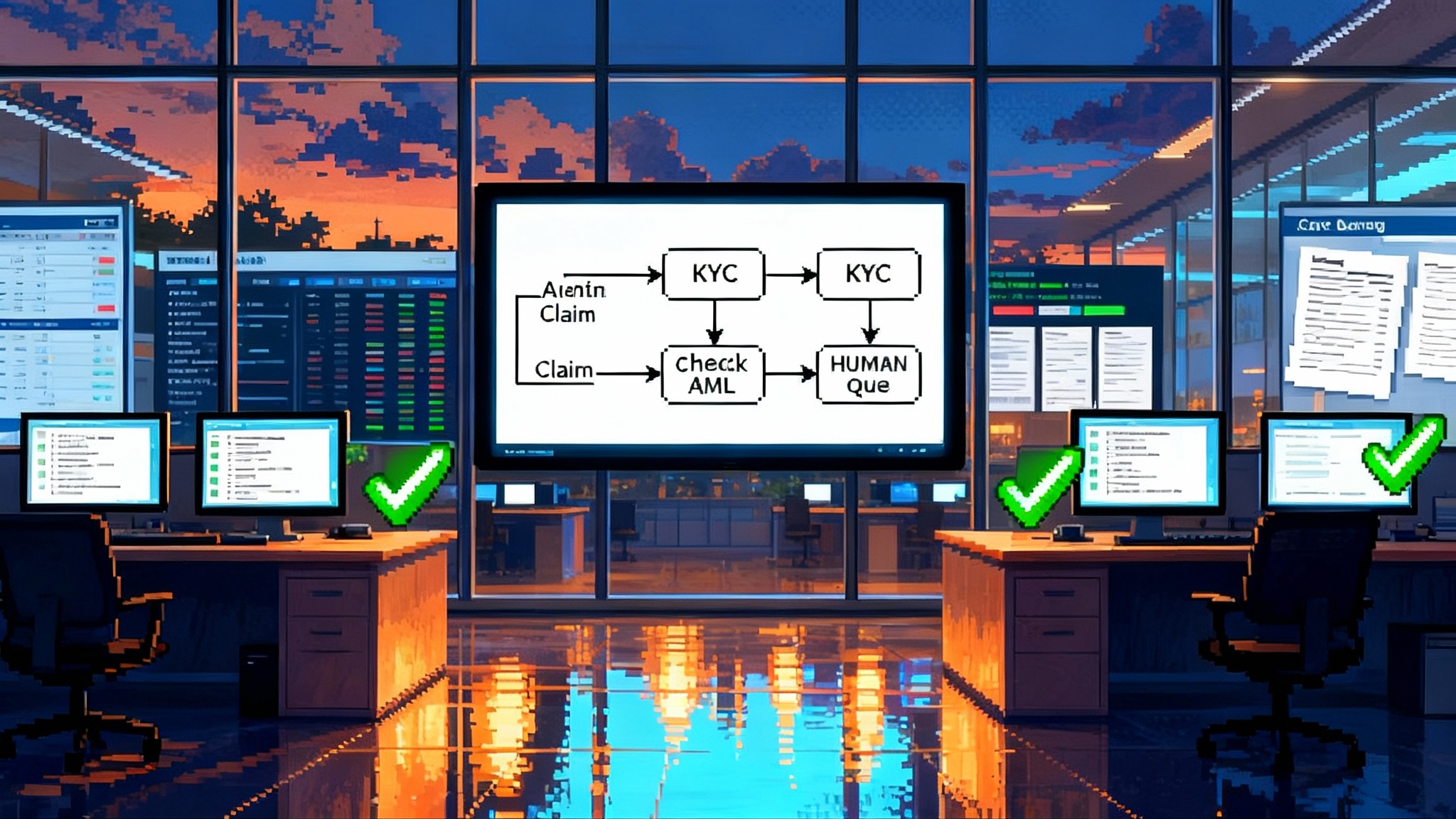

Oversight explained

Oversight is the difference between a lively team and a loose collection of freelancers. A supervising agent assigns work, evaluates outputs, and compares plans across agents. It mediates conflicts, schedules retries, enforces budgets, and turns ambiguous user goals into structured subgoals others can execute. Crucially, it exposes a surface where policy attaches. If you can see every planned action, every call to a tool, and every handoff, you can enforce business logic without hand-wiring every path.

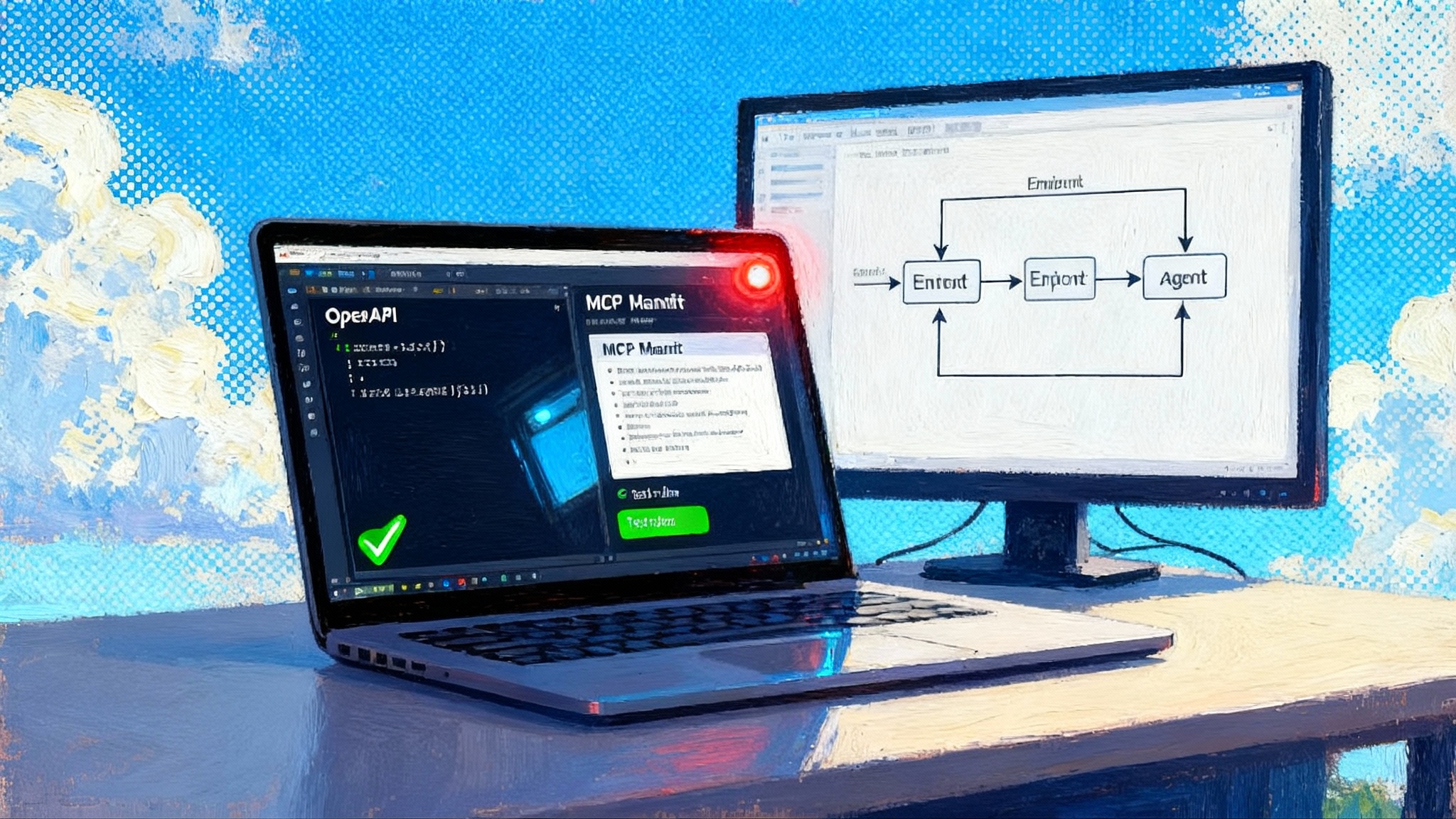

Where standards fit: A2A and MCP

Two standards are converging on this idea of cooperative agents.

- The Agent2Agent protocol, usually shortened to A2A, defines how agents describe themselves, share capabilities, delegate tasks, and return results across organizational boundaries. The Linux Foundation now stewards it as an open project, as described in “Linux Foundation A2A project launch.” Interoperability and policy controls travel better when the wire format is stable.

- Model Context Protocol, or MCP, standardizes how models attach tools, data, and live system context. It gives agents a predictable way to reach files, databases, and services through registered servers.

In practice, A2A helps agents talk to each other, while MCP helps each agent talk to the world of tools. Together, they form the plumbing for meta agent oversight. A supervising agent can plan across a graph of workers because discovery and invocation are consistent.
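To make the tool side concrete, here is a rough sketch of what a tool invocation looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method, passing the tool's `name` and `arguments`. The helper `mcp_tool_call` and the tool name `search_documents` are hypothetical, and transport and session setup are omitted. Check the current specification revision before relying on exact field names.

```python
import json
from itertools import count

# Monotonic request ids so responses can be matched to requests.
_request_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request that asks an MCP server to run one tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# "search_documents" is a made-up tool name for illustration only.
message = mcp_tool_call("search_documents", {"query": "renewal terms", "limit": 5})
print(message)
```

Because every tool call has the same envelope, a supervising agent can log, budget, and gate calls without knowing anything about the tool behind them.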

If you want a deeper sense of how testing and governance intersect with cross-agent handoffs, revisit our coverage in “LambdaTest agent to agent QA arrives,” where we explored methods to make agent behavior measurable rather than mysterious.

Startup approach versus enterprise orchestration

RUNSTACK’s approach embodies a clean slate. It frames the platform as an agent operating system that owns its integration learning loop and wraps oversight around everything by default. The startup view looks like this:

- One conversational interface that spins up new agents on demand, then keeps a history of their performance as a memory the manager can query.

- A self-learning connector layer that treats each new API as a teachable task rather than a manual integration project.

- A supervisory agent that audits, throttles, retries, and replaces subordinate agents based on policy and metrics.

Enterprise platforms are moving in the same direction, but from the opposite end. They start with governance and observability and work backward into agent autonomy. In many enterprise tools you see policy gates, identity controls, and audit logs leading the design. The orchestration often sits inside a studio with roles for developers and administrators, with connectors vetted and security baselines aligned to company standards. Autonomy is permitted inside corridors of control.

The open A2A movement will accelerate adoption inside these studios because it offers interop without sacrificing logs, identities, and service level agreements. When a buyer can get portability and policy in the same package, the risk calculus changes.

The practical difference for a builder is speed of iteration. The startup stack attempts to remove the human in the middle of integration work. The enterprise stack tries to guarantee that the human in the loop has the right levers to stop, inspect, and roll back. Both are valid. The choice depends on your tolerance for blast radius and on whether your main bottleneck is compliance or delivery.

How this differs from today’s open-source agent kits

Open-source agent frameworks have taught us a lot. LangGraph and LangChain popularized graph-style orchestration and clear tool calling. AutoGen pushed collaborative agent chat patterns. CrewAI adopted role-based teams. LlamaIndex focused on context construction. These projects showed that many tasks benefit from specialization and coordination.

What the meta agent operating system adds is continuous integration learning plus a first-class manager. Instead of hand-authoring a connector or wiring a specific tool to a node, the system treats tools as a domain to explore. It can propose an integration plan, run probes, generate tests, and publish a connector for others to reuse. It also keeps a ledger of tool behavior and agent outcomes. When a vendor updates a field or rate limits a method, the manager notices and triggers a repair cycle.
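One simple way a manager could notice that a vendor changed a field is to compare each response against the shape recorded in its ledger. The sketch below assumes a flat response and a hypothetical `detect_drift` helper. A real system would handle nested schemas and would feed the findings into a repair cycle rather than printing them.

```python
def detect_drift(expected_fields: dict, observed: dict) -> list:
    """Compare a live response with the recorded field types and list differences."""
    findings = []
    for name, expected_type in expected_fields.items():
        if name not in observed:
            findings.append(f"missing field: {name}")
        elif not isinstance(observed[name], expected_type):
            actual = type(observed[name]).__name__
            findings.append(f"type changed: {name} is {actual}, expected {expected_type.__name__}")
    # Fields the ledger has never seen; sorted so the report is stable.
    for name in sorted(observed.keys() - expected_fields.keys()):
        findings.append(f"new field: {name}")
    return findings


# Shape recorded when the connector was learned (illustrative values).
ledger_shape = {"id": int, "total": float}
# A later response in which the vendor now sends totals as strings.
live_response = {"id": 7, "total": "19.99", "currency": "USD"}

findings = detect_drift(ledger_shape, live_response)
print(findings)
```

An empty findings list means the connector still matches its ledger. Anything else is a candidate trigger for the repair cycle.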

For a view of how user interfaces are also shifting, read our analysis of when the browser becomes the agent. The front end is no longer a passive form. It is an actor that can coordinate tools, retrieve context, and enforce policies inline. That trend pairs naturally with meta agents that manage the back end.

And because APIs remain the backbone of tool access, it is worth seeing how developer tooling is evolving. We covered how documentation and client generation are converging in “APIs go agent first with Theneo.” A self-building layer is more likely to succeed in a world where APIs are consistently described and discoverable.

What self-building looks like in practice

Consider a familiar workflow. A sales agent creates a quote, opens a support ticket for a tricky requirement, and updates a timeline in a project board. In a typical setup a human connects the dots across three applications. An engineer writes connectors, adds a job to a scheduler, and hopes each vendor keeps its endpoints stable.

In a self-building system, the meta agent starts by reading the API specs for the quote tool. It generates a client and test suite, runs a few test requests on a sandbox, and infers rate limits and pagination. It then maps fields between the quote tool and the ticketing system, proposes a schema alignment, and runs an end-to-end simulation. If something breaks, it captures the failure, rolls back, and retries with a fallback plan. The supervising agent records each step, tags it with cost and latency, and produces a short narrative that a human reviewer can skim.
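The field-mapping step can be illustrated with a small sketch. The field names, `FIELD_MAP`, and the `align` helper are all hypothetical. The point is that a proposed mapping can be checked against the target system's required fields before anything is written.

```python
# Proposed mapping from quote-tool fields to ticketing-system fields (illustrative).
FIELD_MAP = {
    "quote_id": "external_ref",
    "customer_email": "requester",
    "notes": "description",
}


def align(record: dict, field_map: dict, required: set) -> tuple:
    """Apply a field mapping and report which required target fields are still empty."""
    mapped = {
        target: record[source]
        for source, target in field_map.items()
        if source in record
    }
    missing = sorted(required - set(mapped))
    return mapped, missing


quote = {"quote_id": "Q-17", "customer_email": "a@example.com", "amount": 1200}
ticket, missing = align(quote, FIELD_MAP, required={"external_ref", "requester", "description"})
print(ticket)
print(missing)
```

Here the quote has no `notes` value, so the required `description` field is reported as missing. That is the kind of gap a simulation run should surface before the connector is promoted.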

The same pattern applies to unstructured work. Suppose a research team wants to compare a dozen papers, draft a summary, and file a budget request. A planning agent divides the work into retrieval, reading, synthesis, and paperwork. Tool learning shows up in the retrieval stage. If the document store changes its search interface, the integration layer relearns the method and restores the pipeline without a ticket. Oversight remains present the whole time. The manager approves plans, applies budgets, and escalates uncertain steps to a human or a stronger model.

Oversight that earns trust

A meta agent can make a system safer because it creates a surface for policies. High-quality oversight includes these ingredients:

- Plan and act separation. The system should present a plan to a human or a policy engine before it acts on important resources. Plans and diffs become the main review surface.

- Budget controls. Supervisors track cost, time, and risk, and halt work that exceeds thresholds.

- Escalation logic. Uncertain steps get sent to a human or a stronger model. The system should know what it does not know.

- Memory with permissions. Useful memories survive across sessions, but only within the right boundaries. A sales memory should not leak into finance unless a policy says so.

- Repair playbooks. When a tool fails or drifts, the manager runs a known sequence of probes and fallbacks, then logs the fix with context.

If a platform gives you these as defaults, you spend less time inventing safety patterns and more time shipping value.
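As a rough sketch of how plan and act separation, budget controls, and escalation fit together, the reviewer below inspects a plan before any step runs. `Step`, `review_plan`, and the threshold values are hypothetical, and the costs and confidences are made-up numbers rather than output from any real platform.

```python
from dataclasses import dataclass


@dataclass
class Step:
    action: str
    estimated_cost: float  # e.g. dollars of model and API spend
    confidence: float      # the planner's own estimate, between 0.0 and 1.0
    touches_system_of_record: bool = False


def review_plan(steps: list, budget: float, min_confidence: float = 0.8) -> list:
    """Decide, before anything executes, which steps run, escalate, or halt the plan."""
    decisions = []
    reserved = 0.0
    for step in steps:
        if reserved + step.estimated_cost > budget:
            # Budget control: stop reviewing and halt the rest of the plan.
            decisions.append((step.action, "halt: over budget"))
            break
        if step.confidence < min_confidence or step.touches_system_of_record:
            # Escalation: low confidence or sensitive targets go to a reviewer.
            decisions.append((step.action, "escalate"))
        else:
            decisions.append((step.action, "approve"))
        # Escalated steps still reserve budget, since they may run once approved.
        reserved += step.estimated_cost
    return decisions


plan = [
    Step("read quote", 0.10, 0.95),
    Step("update ledger", 0.20, 0.90, touches_system_of_record=True),
    Step("bulk re-sync", 5.00, 0.99),
]
decisions = review_plan(plan, budget=1.00)
for action, decision in decisions:
    print(f"{action}: {decision}")
```

The useful property is that the decision list exists before any tool is called, so it can serve as the review surface for a human or a policy engine.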

Risks to watch and how to mitigate them

Self-building is powerful, but there are real hazards. Here are the practical ones and the countermeasures.

- Permission creep. A learning integration may request broad scopes to explore. Restrict discovery to sandboxes and require explicit approvals to promote scopes into production.

- Hidden cost growth. Automated probes and retries add tokens and cycles. Attach cost budgets to every plan and require managers to justify overages with improved reliability.

- Model overconfidence. A manager that generates tests may validate the same false assumption in two places. Keep a separate test oracle with human-written assertions for critical paths.

- Data provenance gaps. When agents rewrite data, you need lineage to survive tool changes. Require immutable event logs with correlation identifiers across all agent calls, not just traces inside one framework.

- Vendor drift. A changing external service can trigger a cascade of relearning at bad times. Schedule quiet periods for learning jobs and enforce canary rollouts of new connectors.
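For the data provenance item above, one common way to approximate an immutable log is to chain each event to the hash of the previous one, so later edits become detectable. The `EventLog` class below is a hypothetical in-memory sketch. It gives tamper evidence, not true immutability, which would require append-only storage underneath.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first event


def _digest(body: dict) -> str:
    """Hash an event body deterministically by sorting its keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class EventLog:
    """Append-only log where each event commits to the one before it."""

    def __init__(self):
        self._events = []

    def append(self, correlation_id: str, agent: str, action: str, payload: dict) -> dict:
        body = {
            "correlation_id": correlation_id,
            "agent": agent,
            "action": action,
            "payload": payload,
            "prev_hash": self._events[-1]["hash"] if self._events else GENESIS,
        }
        event = {**body, "hash": _digest(body)}
        self._events.append(event)
        return event

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev = GENESIS
        for event in self._events:
            body = {key: value for key, value in event.items() if key != "hash"}
            if event["prev_hash"] != prev or _digest(body) != event["hash"]:
                return False
            prev = event["hash"]
        return True

    def trace(self, correlation_id: str) -> list:
        """Return every event for one request, across all agents."""
        return [e for e in self._events if e["correlation_id"] == correlation_id]


log = EventLog()
log.append("req-1", "planner", "plan", {"steps": 2})
log.append("req-1", "worker", "call_tool", {"tool": "crm"})
log.append("req-2", "planner", "plan", {"steps": 1})
print(log.verify(), len(log.trace("req-1")))
```

The correlation identifier is what lets lineage survive across frameworks: any agent that writes to the log with the same identifier shows up in the same trace.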

Adoption signals that matter

If you want to know when this approach is ready for your team, watch for these signals.

- Private beta quality. Do early customers report that integrations install in minutes and survive an upgrade cycle without tickets? One practical test is whether a connector learned in sandbox still works after a vendor deploys a minor version.

- Standards convergence. Are your suppliers moving to A2A for agent collaboration and to MCP for tool access? Watch for default support in studios and cloud services, not just open repositories.

- Eval maturity. Does the platform let you define task suites with ground truth, and can it run them nightly against rolling connector updates? Look for signal attribution at the tool and agent level, not just an overall score.

- Observability depth. Do you get plan diffs, cost heatmaps, error clusters, and session replays? If you can answer why a plan changed yesterday, the system is becoming operationally safe.

- Policy composition. Can you attach the same budget rule to all agents that touch a system of record? If policies travel across tools without code changes, the platform is maturing.
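To show what attribution at the tool and agent level means in practice, the sketch below breaks one nightly run into per-agent and per-tool pass rates. The result records, agent names, and the `attribute` helper are invented for illustration.

```python
from collections import defaultdict


def attribute(results: list) -> dict:
    """Compute pass rates keyed by ("agent", name) and ("tool", name)."""
    totals = defaultdict(lambda: [0, 0])  # key -> [passed, total]
    for result in results:
        for key in (("agent", result["agent"]), ("tool", result["tool"])):
            totals[key][0] += int(result["passed"])
            totals[key][1] += 1
    return {key: passed / total for key, (passed, total) in totals.items()}


# One nightly run of a task suite with ground truth (made-up outcomes).
nightly = [
    {"task": "t1", "agent": "quoter", "tool": "crm", "passed": True},
    {"task": "t2", "agent": "quoter", "tool": "ticketing", "passed": False},
    {"task": "t3", "agent": "researcher", "tool": "ticketing", "passed": False},
    {"task": "t4", "agent": "researcher", "tool": "search", "passed": True},
]

rates = attribute(nightly)
overall = sum(r["passed"] for r in nightly) / len(nightly)
print(overall)
for key in sorted(rates):
    print(key, rates[key])
```

In this invented run the overall score and both agents sit at 50 percent, which says little. The per-tool view shows every failure involves the ticketing connector, which is where a repair should start.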

How to choose: platform versus DIY kits

If you are deciding between a do-it-yourself open-source build and a meta agent platform, frame the choice around three questions.

- How often will your integrations change? If the answer is weekly, a self-building layer may pay for itself by preventing drift outages and reducing pager fatigue.

- How sensitive are your workflows? If you touch money, health, or privacy, choose the path with better oversight controls even if raw iteration is slower.

- Who will own reliability? If your team is small, an operating system that watches the agents can substitute for a dedicated platform squad.

A healthy strategy is a hybrid. Use open-source frameworks to prototype and to keep leverage. Use a meta agent platform for the highest-churn or highest-stakes workflows. Insist on open standards so you can switch layers later.

A note on interoperability and markets

The real unlock is not a single perfect model. It is a manager that learns tools, supervises workers, and proves its work with plans, logs, and tests. A2A and MCP remove much of the glue code that made multi-agent projects brittle. That is why standards activity matters. It accelerates a shift from clever proof-of-concept bots to organizations of agents that can survive change.

We have seen the same story in adjacent domains. Testing moved from ad hoc scripts to structured suites and dashboards. Documentation moved from static PDFs to interactive reference portals. As standards become defaults, new platforms can compete on oversight quality, learning speed, and operating economics rather than on one-off integrations.

What to do next

- Inventory your critical workflows. Identify the tasks where integrations fail most often and where a self-building layer would save rework.

- Stand up a small MCP sandbox. Register only non-sensitive tools. Measure how quickly new tools can be discovered, validated, and attached to an agent.

- Pilot A2A handoffs. Start with a single handoff between two agents that live in different frameworks. Evaluate how well plans, states, and results travel across the boundary.

- Build a safety harness first. Define budgets, escalation paths, and an independent test oracle. Make it a gate for any new integration.

- Track the signals above. Ask vendors for roadmap dates, private beta access, and examples of repair playbooks that run in production.

The bottom line

RUNSTACK’s announcement is a marker that the market is shifting from clever chatbots toward coordinated AI organizations. The key is not a single powerful model. The key is a manager that learns the tools, watches the workers, and keeps the lights on while everything changes around it. With A2A enabling agents to cooperate across boundaries and MCP giving each agent a stable way to reach tools and data, the foundations are finally solid enough to build on.

If you build software that depends on outside services, this approach offers leverage. You gain a system that keeps learning the shape of your environment and that proves its work with plans, logs, and tests. The risks are real, but they are concrete and manageable with the right guardrails. The winners will be the teams that treat agent fleets like organizations, that invest in oversight before scale, and that pick standards that travel. Those teams will ship faster today, and they will still be shipping on the day the next tool changes the rules again.