NVIDIA Rubin CPX breaks the context wall for AI agents

Rubin CPX targets the hardest part of inference, the context phase, making 100 million token windows and cheaper long-context runs plausible. Here is what it means for agent stacks and a concrete 90 day plan to get ready.

Breaking: Rubin CPX targets the context phase

NVIDIA has introduced Rubin CPX, a new class of processor aimed squarely at the heaviest part of modern inference: the context phase. That is the work a model must do before it generates a single token. It is the time it spends reading prompts, assembling documents, walking codebases, loading key value caches, and building a working memory that enables reasoning. By accelerating this prefill stage, Rubin CPX promises meaningful gains for million scale token windows and the memory rich workloads that agent systems increasingly require. You can see the positioning in the official announcement where NVIDIA unveils Rubin CPX.

At rack scale, Rubin CPX is paired with the broader Rubin generation in integrated systems with vast on tap memory and high aggregate throughput. NVIDIA highlights a platform that targets multi document, multi session workloads where keeping large context resident is the difference between smooth reasoning and stalls. In the same vein, partners cited by NVIDIA describe one hundred million token windows that can keep entire codebases and years of interaction history in memory. Those claims are reiterated in the Rubin CPX press release, which positions CPX as a compute optimized prefill engine within a disaggregated inference pipeline.

Why long context is hard

If generation is a sprint, context is the uphill climb that comes first. The difficulty shows up in two places:

- Attention compute. In standard attention, every token attends to every earlier token, so total work grows roughly with the square of sequence length. The cost per token keeps rising as the window grows, which compounds quickly as you approach million token scales.

- Memory bandwidth and layout. Moving and reorganizing enormous key value caches stresses high bandwidth memory. Complex layouts and cache misses burn cycles and power, adding latency and cost before any decoding begins.
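To make the two pressures concrete, here is a back of the envelope sketch in Python. It assumes plain dense causal attention and a hypothetical model shape (32 layers, 4096 hidden size, 8 key value heads of dimension 128, 2 bytes per value). None of these numbers come from NVIDIA; they are placeholders you would swap for your own model.

```python
def attention_flops(seq_len: int, n_layers: int, d_model: int) -> int:
    """Rough FLOPs for attention scores and weighted values in one causal prefill.

    Per layer, QK^T and the attention-weighted sum over V each cost about
    2 * seq_len^2 * d_model FLOPs; causal masking leaves roughly half of that.
    """
    return n_layers * 2 * seq_len**2 * d_model


def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to hold keys and values for every layer and position."""
    return 2 * n_layers * seq_len * n_kv_heads * head_dim * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
LAYERS, D_MODEL, KV_HEADS, HEAD_DIM = 32, 4096, 8, 128

for tokens in (100_000, 1_000_000, 10_000_000):
    flops = attention_flops(tokens, LAYERS, D_MODEL)
    cache_gb = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 1e9
    print(f"{tokens:>10,} tokens: {flops:.2e} attention FLOPs, {cache_gb:,.1f} GB KV cache")
```

Under these assumptions, a tenfold longer window costs about one hundred times the attention work but only ten times the cache. That asymmetry is why prefill deserves its own hardware budget.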

Rubin CPX attacks both problems by tuning for prefill. It is designed to push attention heavy workloads through quickly, and to pair that with a memory configuration that favors context building rather than extended decode. In plain language, CPX acts like a dedicated cargo loader for your model. It gets everything staged so that the generation engines can take off without delay.

What CPX changes in the pipeline

Most serving stacks grew up scaling decode first. Teams added more general purpose accelerators and hoped prefill would not dominate. That worked until window sizes and memory needs exploded. CPX formalizes a split pipeline:

- Context phase on CPX. Fast prefill, aggressive attention throughput, and memory access tuned for reading large source sets and assembling KV caches.

- Generation phase on standard Rubin parts. High capacity memory and throughput optimized for token by token decode across text, code, or multimodal outputs.

This disaggregated approach mirrors classic data platforms that separate ingest from query. You do not run extract transform load on the same nodes that handle your most latency sensitive reads. With CPX, that separation becomes first class for inference. The immediate benefit is operational control. You can plan clusters with distinct pools, queue context heavy requests to CPX nodes, then hand off to decode pools. Scheduling becomes simpler, head of line blocking from a few very long contexts is reduced, and capacity planning gets clearer levers.
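A minimal sketch of that separation, assuming a simple token count threshold decides which pool sees a request first. The `Request` and `SplitScheduler` names and the 200,000 token threshold are hypothetical, not part of any NVIDIA or serving framework API.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    prompt_tokens: int


class SplitScheduler:
    """Keeps long prefills in their own queue so they cannot block short requests."""

    def __init__(self, long_context_threshold: int = 200_000):
        self.threshold = long_context_threshold  # hypothetical cutoff
        self.context_queue: deque[Request] = deque()
        self.decode_queue: deque[Request] = deque()

    def submit(self, req: Request) -> str:
        # Long prompts wait in the context pool; short ones go straight to
        # the decode pool, which handles their small prefill itself.
        if req.prompt_tokens >= self.threshold:
            self.context_queue.append(req)
            return "context"
        self.decode_queue.append(req)
        return "decode"

    def finish_prefill(self) -> Request:
        """Hand the oldest prefilled request from the context pool to decode."""
        req = self.context_queue.popleft()
        self.decode_queue.append(req)
        return req


scheduler = SplitScheduler()
print(scheduler.submit(Request("chat-1", 4_000)))          # short prompt
print(scheduler.submit(Request("codebase-1", 3_000_000)))  # long prompt
```

Because the long request sits in a separate queue, the short chat request is never stuck behind it, which is the head of line benefit described above.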

Numbers that matter for agent builders

The exact figures will vary by configuration, but the headline ideas are consistent with what NVIDIA has shared:

- Attention throughput. NVIDIA claims roughly three times faster attention compared with GB300 NVL72 systems, which aims directly at prefill latency. That means shorter time to first token for long windows, and higher effective throughput for context rich jobs.

- Memory on tap. The single rack Vera Rubin NVL144 CPX platform is described with one hundred terabytes of fast memory and 1.7 petabytes per second of memory bandwidth. That can keep far more context resident and reduce costly swapping.

- Context scale. Partners validating windows near one hundred million tokens point to a new regime where codebases, product histories, and long lived sessions can be held in full rather than constantly summarized.

These are not benchmark curiosities. They shift how you design agent memory. Instead of compressing history into ever smaller summaries, you can place more first party truth inside the window. That includes raw logs, signed documents, and versioned code snapshots that your model can cite directly.

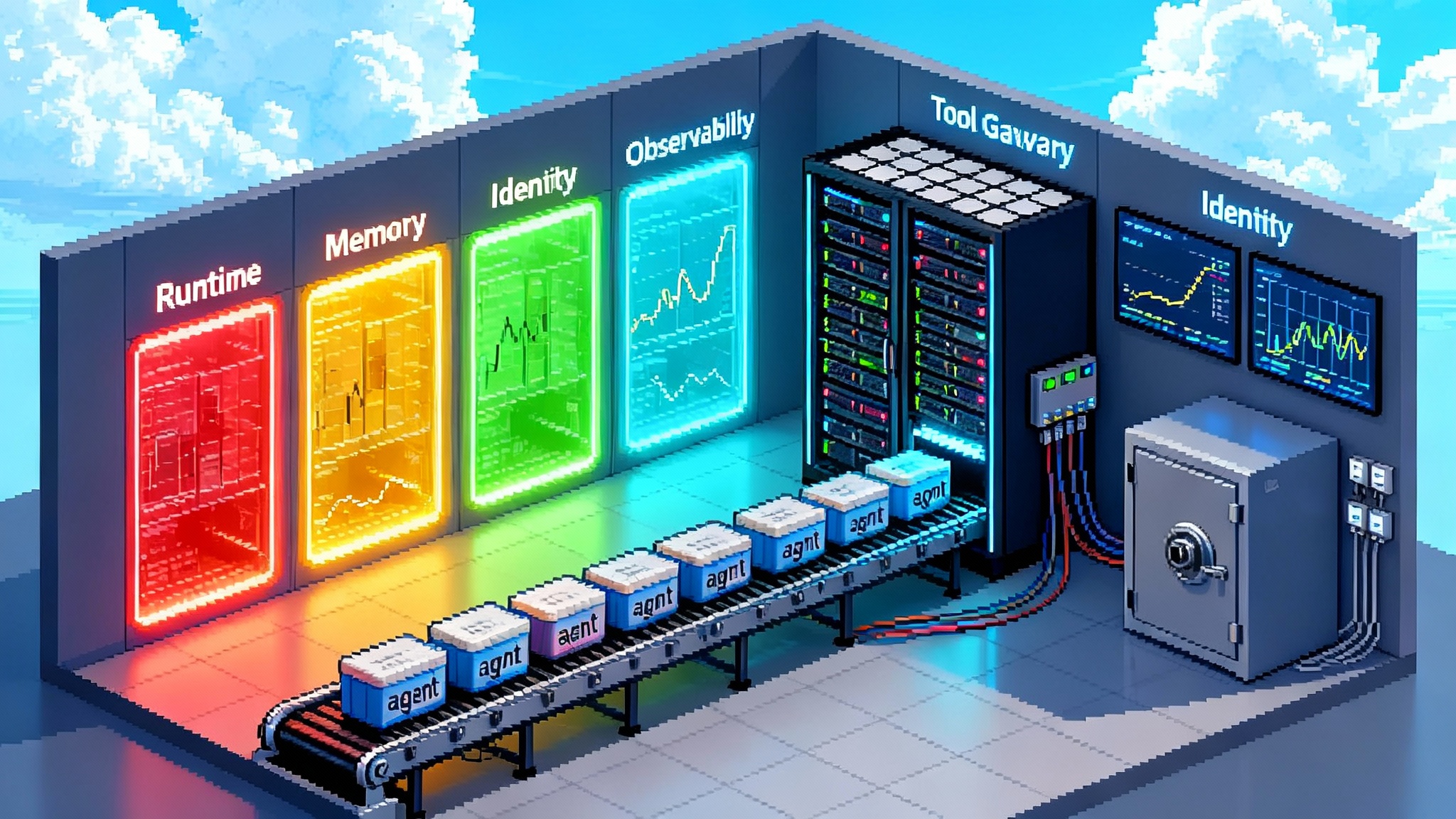

How CPX fits NVIDIA’s broader agent stack

Hardware is only half the story. NVIDIA has been assembling a software stack that sits above CPX and turns long context feasibility into production patterns. The company has discussed reference blueprints for connecting enterprise knowledge to agents that perceive, reason, and act. It has packaged inference services that simplify deployment across on premises and cloud environments, and it has continued to invest in retrieval, memory, and evaluation tooling.

Just as importantly, the wider ecosystem is maturing. Teams shipping agent runtimes today have learned that success at scale requires strong contracts, observability, and safe tool use. If you are mapping CPX into your plans, study how production frameworks approach orchestration. For example, look at how organizations use OpenAI AgentKit in production to standardize tool schemas and execution, or how browser based runtimes are evolving in the Atlas Agent Mode overview. On the operations side, examine the patterns in AWS AgentCore enterprise runtime that treat agents as long lived services with clear budgets and guardrails.

What this unlocks for autonomous agents

- Persistent memory. When a session’s full history and a user’s working set can live inside the window, the agent behaves consistently across days and projects. Hand offs between tasks do not lose nuance. You avoid the compounding loss from summary of a summary.

- Multi document reasoning. Agents can read a shelf of manuals, reconcile them with incident tickets across years, and track version drift without brittle chunking rules. The model can cite primary sources because they are actually in context.

- Tool using workflows. With structured logs and durable context, the agent can call tools, reflect on results, and maintain a clean state machine over long horizons. That reduces flakes and makes outcomes more auditable.

The result is not just better single answers. It is steadier autonomy over entire workflows because the agent can re inspect what it read, repeat steps deterministically, and evolve plans without losing the thread.

A 90 day plan to prepare your stack

You do not need CPX hardware on day one to get ready. Use the next three months to reshape data, evaluation, and operations so you can exploit long context as soon as it is available.

Days 1 to 30: build the corpus and the baselines

Data

- Curate first party sources that benefit from full text inclusion. Prioritize regulated documents, engineering runbooks, policy manuals, architecture decision records, and past incident tickets.

- Freeze weekly snapshots of critical code repositories and high value wikis. Treat each snapshot as a single source of truth object with version tags and owners.

- Capture session logs for your most important workflows. Preserve raw context and tool traces, not only final answers, and store them with retention rules.

Evaluation

- Create a long context suite with tasks at 1 million, 10 million, and 50 million tokens. Include multi hop retrieval, cross version code search, and policy compliance checks.

- Track three core metrics: prefill latency at the ninety fifth percentile, answer quality on retrieval grounded tasks, and cost per million prefill tokens.

- Add stress tests for drift and contradiction. The agent should detect and reconcile inconsistent documents rather than cherry pick the convenient one.
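Two of the three core metrics are plain arithmetic, so it helps to pin down the definitions early. The sketch below assumes a nearest rank percentile and a single blended dollar figure for prefill spend. The function names and sample numbers are illustrative.

```python
def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile: smallest sample with at least 95% at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def cost_per_million_prefill_tokens(total_cost_usd: float, prefill_tokens: int) -> float:
    """Dollars spent on prefill, normalized to one million prompt tokens."""
    if prefill_tokens <= 0:
        raise ValueError("prefill_tokens must be positive")
    return total_cost_usd * 1_000_000 / prefill_tokens


# Made-up prefill latencies in seconds for one task class.
latencies = [12.0, 14.5, 13.2, 55.0, 12.8, 13.9, 15.1, 12.2, 14.0, 13.3]
print("p95 prefill latency:", p95(latencies))
print("cost per million prefill tokens:", cost_per_million_prefill_tokens(50.0, 25_000_000))
```

Answer quality on retrieval grounded tasks needs a rubric of your own, so it is left out here.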

Operations

- Split serving into two logical pools now, even without CPX. Treat one pool as context heavy and the other as decode heavy. This primes schedulers, autoscalers, and observability for the split world.

- Instrument context build time separately from decode time. You will need this split metric to decide when to route work to CPX nodes later.

- Add a cache of prepared context. Pre compute chunking, embeddings, and KV caches for hot datasets and pin them with clear retention policies.
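One lightweight way to get the split metric is a timer that accumulates seconds per named stage. This is a sketch using only the Python standard library. `StageTimer` and the stage names are assumptions, and the two `with` bodies are stand-ins for real context assembly and generation.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock seconds per named pipeline stage."""

    def __init__(self):
        self.seconds = defaultdict(float)

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the elapsed time even if the stage raised an exception.
            self.seconds[name] += time.perf_counter() - start


timer = StageTimer()
with timer.stage("context_build"):
    window = " ".join(["log line"] * 10_000)  # stand-in for context assembly
with timer.stage("decode"):
    answer = window[:20].upper()              # stand-in for generation
print(dict(timer.seconds))
```

Exporting these two numbers per request is enough to see later which task classes would benefit from routing to dedicated prefill nodes.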

Decision gates

- Define quality bars for each task class. For example, accept a two times slower response at 50 million tokens if the answer quality improves by a fixed number of points on your domain rubric.

- Set budget caps for per conversation spend. Long context can blow up costs without ceilings and back off strategies.
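Both gates can be expressed as small, testable rules. The sketch below is one possible shape. The five point quality bar and the dollar amounts are placeholders, since the right thresholds depend on your own rubric and pricing.

```python
from dataclasses import dataclass


@dataclass
class ConversationBudget:
    """Hard spending ceiling for a single conversation."""
    cap_usd: float
    spent_usd: float = 0.0

    def try_spend(self, amount_usd: float) -> bool:
        # Refuse the step instead of overshooting the cap.
        if self.spent_usd + amount_usd > self.cap_usd:
            return False
        self.spent_usd += amount_usd
        return True


def accept_long_context(latency_ratio: float, quality_gain_points: float,
                        max_latency_ratio: float = 2.0,
                        min_quality_gain: float = 5.0) -> bool:
    """Accept the slower long-context route only if quality improves enough.

    Both thresholds are hypothetical defaults, not recommendations.
    """
    return latency_ratio <= max_latency_ratio and quality_gain_points >= min_quality_gain


budget = ConversationBudget(cap_usd=10.0)
print(budget.try_spend(6.0))   # fits under the cap
print(budget.try_spend(5.0))   # would exceed the cap, so it is refused
print(accept_long_context(latency_ratio=1.8, quality_gain_points=7.0))
```

When `try_spend` returns False, the caller can back off to a smaller window or ask the user before continuing.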

Days 31 to 60: harden memory, tooling, and observability

Data and memory

- Move from naive chunking to structure aware packaging. Store tables, code files, and scanned PDFs with explicit boundaries and metadata for fast inclusion.

- Define a memory schema for agents. Separate ephemeral working notes from durable project memory, and map both to retrievers and window assembly rules.

- Build lineage tracking. Every context segment should carry source, version, and permission. Make it easy to see exactly why a paragraph landed in the window.
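Lineage is easiest to enforce when it is part of the segment type itself. A minimal sketch, assuming each segment also records a short inclusion reason. The field names and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextSegment:
    text: str
    source: str      # where the text came from, for example a repo path or ticket id
    version: str     # snapshot tag or commit
    permission: str  # access label checked at assembly time
    reason: str      # why the assembler included this segment


def explain_window(segments: list[ContextSegment]) -> list[str]:
    """One human-readable lineage line per segment, in window order."""
    return [f"{s.source}@{s.version} [{s.permission}]: {s.reason}" for s in segments]


window = [
    ContextSegment("Restart the ingest worker...", "runbooks/ingest.md", "2025-w14",
                   "sre-read", "matched affected service"),
    ContextSegment("def retry(...): ...", "repo/core/retry.py", "v3.2.1",
                   "eng-read", "changed in most recent release"),
]
for line in explain_window(window):
    print(line)
```

Making the dataclass frozen means a segment's lineage cannot be edited after assembly, which keeps audits simple.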

Tooling

- Introduce a function registry with typed signatures and explicit side effects. Agents should call tools with self describing contracts and idempotent behavior.

- Add guardrails that work over long horizons. Rate limit high risk tools across a session, not only per call, and audit state transitions.
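Here is one way the registry and a session level rate limit could fit together. It is a sketch, not a reference to any particular agent framework. `ToolRegistry`, `restart_service`, and the limit of one call per session are all made up for illustration.

```python
import inspect
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolSpec:
    func: Callable
    side_effects: str            # plain-language description of what the tool changes
    max_calls_per_session: int


class ToolRegistry:
    def __init__(self):
        self._tools: dict[str, ToolSpec] = {}
        self._calls: dict[tuple[str, str], int] = {}  # (session_id, tool) -> count

    def register(self, func: Callable, side_effects: str, max_calls_per_session: int) -> None:
        # Require a type annotation on every parameter so contracts are self-describing.
        for name, param in inspect.signature(func).parameters.items():
            if param.annotation is inspect.Parameter.empty:
                raise TypeError(f"parameter '{name}' of {func.__name__} has no type annotation")
        self._tools[func.__name__] = ToolSpec(func, side_effects, max_calls_per_session)

    def call(self, session_id: str, tool_name: str, **kwargs):
        spec = self._tools[tool_name]
        key = (session_id, tool_name)
        used = self._calls.get(key, 0)
        if used >= spec.max_calls_per_session:
            raise PermissionError(f"{tool_name} exceeded its session limit")
        self._calls[key] = used + 1
        return spec.func(**kwargs)


def restart_service(name: str) -> str:  # hypothetical high-risk tool
    return f"restarted {name}"


registry = ToolRegistry()
registry.register(restart_service, side_effects="restarts a production service",
                  max_calls_per_session=1)
print(registry.call("session-1", "restart_service", name="ingest"))
```

The counter is keyed by session rather than by call, so an agent that loops for hours still hits the same ceiling.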

Observability

- Implement heatmaps of token contribution. Show which sources dominate the window and how that shifts across steps.

- Create alerts for runaway prefill. If an agent is about to assemble a 60 million token window for a low value task, stop and ask the user to confirm.
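Both ideas reduce to simple bookkeeping over the planned window. The sketch below assumes segments arrive as (source, token count) pairs and that each task value tier has its own token ceiling. The tier names and limits are placeholders.

```python
from collections import Counter


def token_share_by_source(segments: list[tuple[str, int]]) -> dict[str, float]:
    """Fraction of the window contributed by each source."""
    totals: Counter = Counter()
    for source, tokens in segments:
        totals[source] += tokens
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {source: tokens / grand_total for source, tokens in totals.items()}


def needs_confirmation(planned_tokens: int, task_value: str,
                       token_limits: dict[str, int]) -> bool:
    """True when the planned window exceeds the ceiling for this task tier."""
    return planned_tokens > token_limits[task_value]


# Hypothetical ceilings per task tier.
LIMITS = {"low": 1_000_000, "high": 100_000_000}

shares = token_share_by_source([("logs", 600), ("runbook", 300), ("logs", 100)])
print(shares)
print(needs_confirmation(60_000_000, "low", LIMITS))
```

Recording the shares at each agent step gives you the raw data for a heatmap, and the confirmation check runs before any prefill is paid for.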

Evaluation

- Extend your suite with cross document contradiction detection and red team prompts that search for hallucinated merges between similar but mismatched versions.

- Add human review loops on a stratified sample of long context tasks so improvements are statistically real, not anecdotes.

Days 61 to 90: emulate CPX and validate the split pipeline

Emulation and cost modeling

- Emulate CPX by isolating context work on a subset of machines and limiting generation on those nodes. Even if you cannot match CPX speeds, you can validate scheduling and budget models.

- Build a per stage cost calculator. Attribute dollars to prefill and to decode. Decide routing policies based on time or cost savings by task category.
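A per stage calculator can start as accelerator seconds multiplied by an hourly rate for each pool. The rates and durations below are invented for illustration. Real numbers would come from your own billing and the split timing metrics.

```python
def stage_costs(prefill_seconds: float, decode_seconds: float,
                prefill_rate_per_hour: float, decode_rate_per_hour: float) -> dict[str, float]:
    """Attribute dollars to prefill and decode from accelerator time and hourly rates."""
    prefill = prefill_seconds / 3600 * prefill_rate_per_hour
    decode = decode_seconds / 3600 * decode_rate_per_hour
    return {"prefill": prefill, "decode": decode, "total": prefill + decode}


# Hypothetical request: one hour of prefill time, half an hour of decode time.
costs = stage_costs(prefill_seconds=3600, decode_seconds=1800,
                    prefill_rate_per_hour=4.0, decode_rate_per_hour=6.0)
print(costs)
```

Running the same calculation per task category shows where prefill dominates the bill, which is where a dedicated context pool is most likely to pay off.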

Routing and service design

- Implement a two stage router. Stage one assembles and verifies context. Stage two carries out generation. Pass a signed manifest that lists sources and permissions along with the request.

- Add a fast path for small contexts and a slower path for giant windows. Many tasks do not need the expensive route.
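The signed manifest can be prototyped with an HMAC over a canonical JSON encoding. This sketch uses only the Python standard library and assumes a shared secret between the two stages. The manifest fields and the key are illustrative, and a production system would load the key from a secrets manager.

```python
import hashlib
import hmac
import json


def sign_manifest(manifest: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the manifest."""
    payload = json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_manifest(manifest: dict, signature: str, key: bytes) -> bool:
    """Constant-time check that the manifest was not altered after signing."""
    return hmac.compare_digest(sign_manifest(manifest, key), signature)


KEY = b"demo-key-do-not-use"  # placeholder secret for the example only

manifest = {
    "request_id": "inc-1234",
    "sources": [
        {"source": "runbooks/ingest.md", "version": "2025-w14", "permission": "sre-read"},
    ],
}
signature = sign_manifest(manifest, KEY)         # produced by stage one
print(verify_manifest(manifest, signature, KEY)) # checked by stage two
```

Sorting keys before signing keeps the signature stable even if the two stages build the dictionary in a different order.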

Security and compliance

- Enforce permission checks at context assembly, not only at retrieval. Exclude an unauthorized document before its tokens are counted or placed in the window.

- Add privacy filters tailored for long windows. Scan for personal data and secrets across the entire assembled context, not only per document.
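A small sketch of both controls in one assembly step. Permissions are modeled as group sets, token counting is a whitespace split stand-in for a real tokenizer, and the two regular expressions are illustrative patterns only, far from a complete secret scanner.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    name: str
    text: str
    allowed_groups: set[str]


# Illustrative patterns; a real deployment would use a dedicated scanner.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def assemble(documents: list[Document], user_groups: set[str]):
    """Drop unauthorized documents first, then count tokens and scan the whole window."""
    allowed = [d for d in documents if d.allowed_groups & user_groups]
    context = "\n\n".join(d.text for d in allowed)
    token_count = len(context.split())  # stand-in for a real tokenizer
    findings = [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(context)]
    return context, token_count, findings


docs = [
    Document("runbook", "restart the service", {"sre"}),
    Document("payroll", "confidential salaries", {"hr"}),
    Document("notes", "key AKIAIOSFODNN7EXAMPLE", {"sre"}),
]
context, token_count, findings = assemble(docs, user_groups={"sre"})
print(token_count, findings)
```

Because the scan runs on the assembled string, it covers everything that will actually reach the model in one pass.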

Go or no go criteria

- Define business triggers that merit long windows. For example, always use the long route for severity one incidents, legal discovery, or customer renewals above a threshold.

- Set a hard cap on context size until your own evals prove incremental value beyond a threshold.

A worked example: a memory rich support engineer

Imagine a support agent responsible for a complex enterprise application. The agent pulls in the current incident ticket, the last two years of logs for similar incidents, the five most recent releases with their diffs, runbooks for affected services, and the on call calendar. Today this is a fragile dance of retrieval and summarization. With a very long window, you assemble the raw, versioned sources in context and let the model cross reference directly.

Rubin CPX accelerates the heavy context stage so that response times remain predictable even when the working set is vast. Standard Rubin parts then decode a plan, propose fixes, and draft updates for human review. The agent closes the incident with a signed manifest that lists what it read and what it changed. When a related issue reappears, the entire episode is available in context without expensive recomputation.

Operationally, the pipeline looks like this:

- The router classifies the request as context heavy and sends prefill to CPX nodes.

- The context assembler streams in logs, code snapshots, and runbooks, tagging each segment with version and permission data.

- Observability shows a token contribution chart that highlights which sources dominated the window.

- A policy check confirms the request meets the business trigger for long context.

- The signed manifest and prepared KV caches are handed to the decode pool for final reasoning and generation.

The outcome is not just a faster answer. It is a repeatable playbook that scales to the next incident with fewer flakes and better evidence.

What to watch before general availability

- Maturity of the disaggregated pipeline. Expect serving frameworks to grow first class support for context and generation pools, plus new scheduling policies and autoscaling strategies that respect per stage SLAs.

- Model behavior at extreme lengths. Quality can degrade with very long context. Track updates to positional encodings, attention variants, and memory management that keep reasoning stable at scale.

- Availability and timelines. NVIDIA has indicated that Rubin CPX targets late 2026 availability. Plan to emulate and run phased pilots while you wait for production hardware.

The bottom line

The last two years were dominated by bigger models. The next two are about useful memory. Rubin CPX attacks the context wall by making the prefill stage faster and more affordable, then pairing that hardware with a broader stack that turns long windows into production wins. You do not need to wait for new silicon to gain leverage. If you reshape your data, invest in long context evaluation, and split your serving pipeline now, you can ship agents in 2026 that think across entire projects rather than isolated prompts. That is the shift that matters for businesses: durable memory, steady reasoning, and a price you can plan for.