The Memory Layer Moment: Mem0’s rise and what comes next

Mem0's October funding made persistent memory for agents feel like infrastructure. This article breaks down what a memory layer does, why MCP toolchains and agent clouds changed the game, and how to ship it safely.

This week, memory became infrastructure

On October 28, 2025, Mem0 announced a 24 million dollar round to become the default memory layer for AI agents. The company positions itself as a vendor agnostic fabric that travels with the user across models and apps. If you missed the news, the company announcement, Mem0 raised 24 million, covers investors, roadmap, and positioning.

The funding landed in a market that had already shifted. Toolchains that speak the Model Context Protocol and agent clouds that keep stateful sessions alive have turned persistent memory from a novelty into a requirement. If you plan to win in 2026, design for memory portability, governance, and evaluation now. Teams that do will ship faster, avoid rewrites, and reduce lock in risks that become painful at scale.

Along the way, this piece connects memory to adjacent changes covered on this blog, including how agents live in the browser in "When the browser becomes the agent" and why testability matters in "Agent to agent QA arrives".

What a memory layer actually does

Forget the fuzzy phrase AI memory. In production, a memory layer is not a vibe. It is a set of concrete services and policies that transform raw interaction data into durable, governed knowledge.

- Capture: extract stable facts, preferences, skills, and constraints from interactions, documents, and tools. For example: "prefers 30 minute meetings", "owns a Peloton Bike Plus", or "uses Snowflake for analytics".

- Normalize: store items as typed objects with provenance, timestamps, and confidence scores. Keep the original evidence alongside the derived memory, not instead of it.

- Retrieve: apply task aware filters such as time decay, recency, salience, and scope. A support agent should not pull a personal fitness note. A wellness coach should.

- Evolve: merge duplicates, resolve conflicts, and retire stale items via policies. A shipping address that changes should not leave behind contradictory records.

- Govern: enforce consent, retention, audit trails, and per actor scopes. Memory must be legible to users and administrators, not just to a model.
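The Retrieve step above can be sketched in a few lines. This is a minimal illustration, not Mem0's implementation: the `Memory` class, the scope names, and the 30 day half life are all assumptions made for the example. It filters by scope first, then ranks by salience weighted with exponential time decay.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Memory:
    text: str
    scope: str            # hypothetical scope names, e.g. "support" or "wellness"
    salience: float       # 0.0 to 1.0, assumed to be assigned at capture time
    updated_at: datetime


def score(memory: Memory, now: datetime, half_life_days: float = 30.0) -> float:
    """Salience weighted by exponential time decay with the given half life."""
    age_days = (now - memory.updated_at).total_seconds() / 86400
    return memory.salience * 0.5 ** (age_days / half_life_days)


def retrieve(memories, scope, now, k=3):
    """Keep only memories in the caller's scope, then rank by decayed salience."""
    in_scope = [m for m in memories if m.scope == scope]
    return sorted(in_scope, key=lambda m: score(m, now), reverse=True)[:k]


now = datetime(2025, 11, 1, tzinfo=timezone.utc)
memories = [
    Memory("prefers 30 minute meetings", "support", 0.8, now - timedelta(days=30)),
    Memory("uses Snowflake for analytics", "support", 0.6, now - timedelta(days=1)),
    Memory("owns a Peloton Bike Plus", "wellness", 0.9, now),
]

# The support agent never sees the fitness note, however salient it is.
support_hits = retrieve(memories, "support", now)
for hit in support_hits:
    print(hit.text)
```

The scope filter runs before ranking on purpose. A high salience memory from the wrong scope should be excluded outright, not merely ranked lower.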

Under the hood, most teams converge on a hybrid architecture. Use a transactional store for canonical memory objects, a vector index for fuzzy lookup, and a summarization service that builds compact task contexts. The trick is not the database. It is the contract. What is a memory object? Who can write to it? How long does it live? How is it measured?

Why this moment is different

Three catalysts converged in 2025.

- Protocol first toolchains. Since the spring, major providers have treated the Model Context Protocol as a first class way to connect models to tools and data. OpenAI added remote MCP server support to its Responses API on May 21, 2025, and integrated MCP into its Agents and Apps SDKs. That move decouples tool choice from model choice. Your calendar connector, code interpreter, and file search can be the same across multiple models and runtimes.

- Agent clouds with long sessions. Providers now ship managed runtimes that keep stateful agents alive long enough to use memory. AWS’s new platform is explicit about this. See the AWS Bedrock AgentCore details, which highlight persistent memory features, complete session isolation, long running workloads, and native observability. E2B, meanwhile, gives agents secure micro virtual machines and desktop sandboxes they can manipulate, so a memory layer can track files, code, and tools the agent touched during a session.

- Economics. Long context windows are useful, but sending 100 thousand tokens of history on every turn burns money and time. A compact memory object that says "prefers markdown in emails" costs pennies to store and milliseconds to retrieve. Teams that shift from transcript replay to memory objects report lower latency, more predictable bills, and fewer model regressions when providers change tokenization or pricing.
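To make the economics concrete, here is a back of the envelope calculation. The price per million tokens, the session length, and the 500 token memory packet are assumptions chosen for illustration, not any provider's actual rates. Only the 100 thousand token replay figure comes from the text above.

```python
# Hypothetical price: not any provider's actual rate.
PRICE_PER_MILLION_INPUT_TOKENS = 3.00  # US dollars, assumed for illustration


def input_cost(tokens_per_turn: int, turns: int) -> float:
    """Dollar cost of the input tokens sent across a whole session."""
    return tokens_per_turn * turns / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS


TURNS = 20                             # assumed session length
replay = input_cost(100_000, TURNS)    # full transcript replayed on every turn
memory = input_cost(500, TURNS)        # assumed 500 token memory packet per turn

print(f"transcript replay: ${replay:.2f}")
print(f"memory objects:    ${memory:.2f}")
print(f"ratio:             {replay / memory:.0f}x")
```

Whatever the real price is, it cancels out of the ratio. Under these assumptions the gap is 100,000 / 500, or 200x, on input tokens alone.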

If you connect these catalysts to what we saw when APIs moved to agent first patterns in "APIs go agent first", a simple picture emerges. Memory is no longer a feature. It is substrate.

The 2026 winners will design for three things

1) Memory portability by default

Portability begins with a clean schema. Treat memory as first class records, not ad hoc strings in prompts. At minimum, store:

- id, type, actor, scope, and subject

- value and value_schema_version

- provenance_evidence with message ids or file hashes

- confidence, salience, and decay_policy

- created_at, updated_at, ttl, and deletion_reason

Make this schema independent of any single vendor. Accept embeddings from multiple providers. Keep derived fields, like summaries or embeddings, separate from the source memory. That way you can reindex or resummarize without rewriting history.
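One way to express the schema above as a typed record is a plain dataclass. This is a sketch under assumptions: the field names follow the list above, but the concrete types, the example values, and the choice of seconds as the `ttl` unit are illustrative guesses, not a published standard.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class MemoryObject:
    id: str
    type: str
    actor: str
    scope: str
    subject: str
    value: dict
    value_schema_version: int
    provenance_evidence: list       # message ids or file hashes
    confidence: float
    salience: float
    decay_policy: str
    created_at: str                 # ISO 8601 timestamps kept as strings for portability
    updated_at: str
    ttl: Optional[int] = None       # seconds (assumed unit)
    deletion_reason: Optional[str] = None

    def to_json(self) -> str:
        """Vendor neutral serialization: plain JSON, no embeddings or summaries."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "MemoryObject":
        return cls(**json.loads(raw))


pref = MemoryObject(
    id="mem_001", type="preference", actor="agent:scheduler", scope="personal",
    subject="user:123", value={"meeting_length_minutes": 30}, value_schema_version=1,
    provenance_evidence=["msg_8841"], confidence=0.9, salience=0.7,
    decay_policy="half_life_30d",
    created_at="2025-11-01T00:00:00+00:00", updated_at="2025-11-01T00:00:00+00:00",
    ttl=31_536_000,
)
print(pref.to_json())
```

Note what is absent: no embedding and no summary field. Derived data lives elsewhere, keyed by `id`, so it can be rebuilt without touching this record.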

Implementation advice:

- Use a relational store for the canonical record. Many teams choose Postgres for transactionality and add pgvector or an external vector service for recall.

- Expose reading and writing through your own MCP server with a stable contract. Your agents call your server, and your server talks to Mem0 or homegrown stores behind the scenes. If you ever switch vendors, the agent contract does not change.

- Maintain an append only event log for writes and merges, then materialize the current view. This provides auditability and makes backfills and migrations tractable.
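The event log advice can be sketched as a fold over events. The event shape here (`seq`, `op`, `memory_id`, `merged_from`) is a hypothetical format invented for the example. In practice the log would live in a table such as `memory_events`, not in a Python list.

```python
# Append only log: events are never edited, only added.
events = [
    {"seq": 1, "op": "write", "memory_id": "m1", "value": "ships to 12 Oak St"},
    {"seq": 2, "op": "write", "memory_id": "m2", "value": "ships to 12 Oak Street"},
    # m2 duplicated m1, so a merge folds it into m1 and retires m2.
    {"seq": 3, "op": "merge", "memory_id": "m1", "merged_from": "m2",
     "value": "ships to 12 Oak Street"},
    {"seq": 4, "op": "write", "memory_id": "m1", "value": "ships to 9 Elm Ave"},
]


def materialize(log):
    """Replay the log in sequence order to build the current view."""
    view = {}
    for event in sorted(log, key=lambda e: e["seq"]):
        if event["op"] == "write":
            view[event["memory_id"]] = event["value"]
        elif event["op"] == "merge":
            view[event["memory_id"]] = event["value"]
            view.pop(event["merged_from"], None)
        elif event["op"] == "delete":
            view.pop(event["memory_id"], None)
    return view


current = materialize(events)
print(current)

# Replaying a prefix of the log answers "what did the agent believe at seq 2?"
as_of_seq_2 = materialize([e for e in events if e["seq"] <= 2])
```

Because the view is derived, a migration or backfill is just a replay with new logic. The history itself never changes.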

2) Memory governance as a product surface

Treat memory like customer data, because it is. Users will forgive a slow answer more easily than an answer that leaks private information or retains something it should not. Make governance visible.

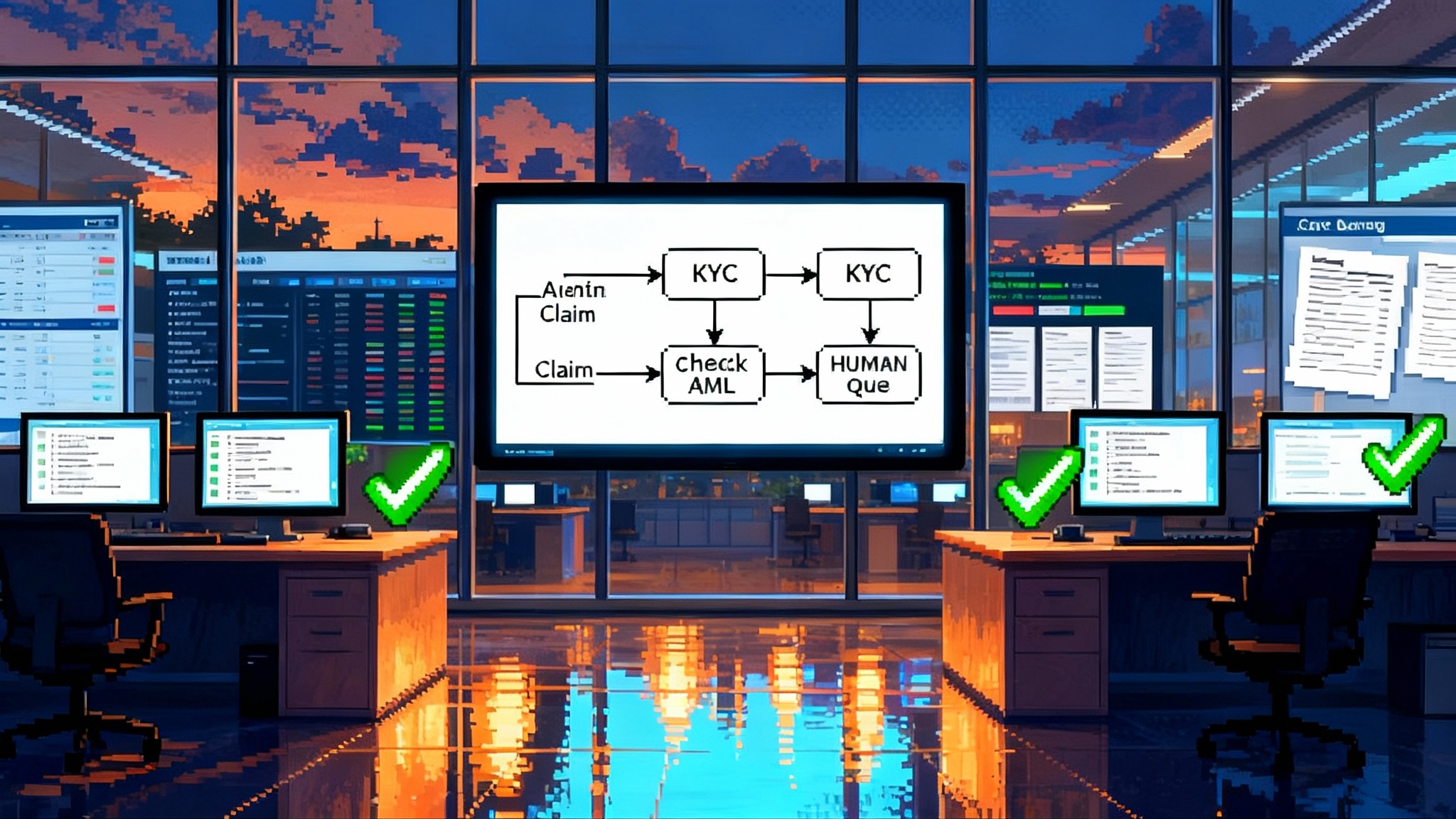

- Consent and scope: explicitly separate personal memory, team memory, and public knowledge. A sales copilot should not mix a representative’s personal notes with team wide product facts without consent.

- Retention and deletion: define time based rules by type. Keep personal preferences for one year since last touch, keep addresses for 90 days after a shipping event, purge failed extractions immediately.

- Redaction and minimization: strip secrets and sensitive fields at capture time. Keep the minimum needed value. For instance, store "uses Google Workspace" instead of raw email addresses unless required.

- Access controls and keys: tie memory scope to identity providers. Keys should never live in prompts. Rotate on schedule and on incident.

- Audit trails: log who or what wrote, updated, or read a memory, and surface it to the user when they ask why an agent knows something.
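The retention rules above translate directly into code. A minimal sketch, assuming hypothetical type names and a single `anchor` timestamp per record: last touch for preferences, the shipping event for addresses, and creation time for failed extractions.

```python
from datetime import datetime, timedelta, timezone

# Retention windows taken from the examples above; type names are assumptions.
RETENTION = {
    "preference": timedelta(days=365),      # one year since last touch
    "address": timedelta(days=90),          # 90 days after a shipping event
    "failed_extraction": timedelta(0),      # purge immediately
}


def is_expired(memory_type: str, anchor: datetime, now: datetime) -> bool:
    """True once the retention window for this type has fully elapsed."""
    return now - anchor >= RETENTION[memory_type]


now = datetime(2025, 11, 1, tzinfo=timezone.utc)
records = [
    ("preference", now - timedelta(days=364)),
    ("address", now - timedelta(days=91)),
    ("failed_extraction", now),
]
to_purge = [(kind, anchor) for kind, anchor in records if is_expired(kind, anchor, now)]
for kind, _ in to_purge:
    print("purge:", kind)
```

Keeping the windows in one table means the policy document and the purge job cannot quietly drift apart. An unknown type raises a `KeyError`, which is deliberate: a memory with no retention rule should fail loudly.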

3) Memory evaluation, not just answer evaluation

You cannot manage what you do not measure. Add a memory test harness to your continuous integration now. Include:

- Recall rate: given a set of seeded facts, how often does the agent retrieve the right ones for a task?

- Precision: how often does a retrieved memory actually help the task? Irrelevant memories should be penalized.

- Forgetting: after a change, how quickly does the system stop using the old value?

- Contamination: how often do transient context snippets get incorrectly promoted into durable memory?

- Drift: how stable are memories when underlying extraction or summarization models change?

Instrument these metrics per agent, per task, and per memory type. Set clear service level objectives, for example 95 percent correct recall on seeded customer preferences in checkout tasks.
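Three of these metrics reduce to set arithmetic. The sketch below uses made up memory ids and treats the seeded set as the relevant set for the task, which is a simplifying assumption. The 0.95 threshold mirrors the example objective above.

```python
def recall(seeded: set, retrieved: set) -> float:
    """Share of seeded facts that the agent actually retrieved."""
    return len(seeded & retrieved) / len(seeded) if seeded else 1.0


def precision(relevant: set, retrieved: set) -> float:
    """Share of retrieved memories that were relevant to the task."""
    return len(relevant & retrieved) / len(retrieved) if retrieved else 1.0


def uses_stale_value(retrieved: set, retired: set) -> bool:
    """Forgetting check: True if any retired value is still being retrieved."""
    return bool(retrieved & retired)


# Hypothetical ids for one checkout task after the user changed their address.
seeded = {"pref:markdown_email", "pref:30_min_meetings", "tool:snowflake", "addr:current"}
retrieved = {"pref:markdown_email", "pref:30_min_meetings", "tool:snowflake", "addr:old"}
retired = {"addr:old"}

RECALL_SLO = 0.95
report = {
    "recall": recall(seeded, retrieved),
    "precision": precision(seeded, retrieved),
    "stale": uses_stale_value(retrieved, retired),
}
report["passes"] = report["recall"] >= RECALL_SLO and not report["stale"]
print(report)
```

This run fails on two counts: recall is 0.75, and the agent is still using the retired address. A nightly job that prints exactly this kind of report is enough to start.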

A build now checklist for teams shipping agentic apps

- Define a memory object schema and register it in code. Ship the first version with clear type names and a version field.

- Stand up a canonical store. Postgres with a memory_objects table and a memory_events log is a strong baseline.

- Add a vector index for recall. Start with a small dimensionality and measure. Reindexing should be a background job, not a rewrite.

- Create an MCP server for your memory API. Implement read_by_scope, write_with_provenance, update_with_conflict_resolution, and search_by_semantics.

- Build a redaction pipeline for capture. Use pattern rules for keys, tokens, and secrets. Fall back to a small classification model for edge cases.

- Ship a Memory Inspector. A simple internal tool that lists what the agent believes, why it believes it, and who wrote it will spare whoever is on call a lot of guesswork.

- Add a Memory Eval job to continuous integration. Seed a repository of synthetic user sessions and expected memory states. Run nightly.

- Decide retention now. Put numbers in a policy document and implement them as code.

- Budget tokens for memory. Cap per turn memory injection and drop in a reranker to keep prompts slim.

- Prepare an export path. JSON Lines with your schema. Prove it works by restoring into a test environment.
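The last item on the checklist is easy to prove in miniature. This sketch writes records as JSON Lines and restores them, using an in-memory buffer as a stand-in for a file or bucket object. The record fields are illustrative, not a full schema.

```python
import io
import json


def export_jsonl(records, fp) -> int:
    """Write one JSON object per line; returns the number of records written."""
    for record in records:
        fp.write(json.dumps(record, sort_keys=True) + "\n")
    return len(records)


def restore_jsonl(fp) -> list:
    """Read records back, skipping blank lines."""
    return [json.loads(line) for line in fp if line.strip()]


records = [
    {"id": "mem_001", "type": "preference", "value": {"email_format": "markdown"},
     "provenance_evidence": ["msg_8841"]},
    {"id": "mem_002", "type": "asset", "value": {"warehouse": "Snowflake"},
     "provenance_evidence": ["file_sha256:ab12"]},
]

buffer = io.StringIO()          # stand-in for an export file
written = export_jsonl(records, buffer)
buffer.seek(0)
restored = restore_jsonl(buffer)

round_trip_ok = restored == records
print(f"exported {written} records, round trip ok: {round_trip_ok}")
```

The comparison at the end is the point. An export that has never been restored is a hope, not a guarantee.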

Vendor lock in risk map

Use this map to spot traps early. For each layer, assess risk, ask for specific guarantees, and keep a mitigation on hand.

- Storage format

  - Risk: proprietary memory objects that cannot be exported with evidence.

  - Ask: JSON export of canonical records with provenance and version history at any time.

  - Mitigation: maintain your own canonical store, use the vendor for indexing and retrieval only.

- Indexing and embeddings

  - Risk: tight coupling to a single embedding model or vector store.

  - Ask: reindexing support and a documented mapping between embedding versions.

  - Mitigation: keep embeddings in a separate table keyed by memory id and model name. Allow multiple embeddings per record.

- Agent runtime

  - Risk: memory coupled to a specific orchestrator or desktop sandbox.

  - Ask: MCP endpoints that are portable. Session export that includes file artifacts and action traces.

  - Mitigation: route memory calls through your MCP server. Store session artifacts in your own bucket.

- Model provider

  - Risk: prompting patterns that require vendor specific tools to retrieve memory.

  - Ask: confirmation that retrieval works with standard tool calls and plain text prompts.

  - Mitigation: test across at least two models monthly. Keep prompts free of provider specific tokens.

- Identity and keys

  - Risk: vendor stores user identity internally with no external mapping.

  - Ask: support for enterprise identity providers and external subject ids.

  - Mitigation: keep your own subject id namespace and map vendor ids at the edge.

- Compliance and audit

  - Risk: opaque logs, no deletion proofs.

  - Ask: signed deletion attestations and queryable access logs.

  - Mitigation: mirror write events to your log. Verify deletions by running scheduled exports and diffs.

- Pricing shape

  - Risk: costs scale with raw context tokens instead of memory objects.

  - Ask: unit pricing that maps to memory records or retrieval calls, not prompt length.

  - Mitigation: cap injected memory size. Cache summaries. Measure every turn.
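The indexing mitigation, embeddings in a separate table keyed by memory id and model name, looks like this in practice. The sketch uses SQLite from the Python standard library so it runs anywhere. The same shape carries over to Postgres. Table names beyond `memory_objects`, the model names, and the JSON encoded vectors are assumptions for the example.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE memory_objects (
    id    TEXT PRIMARY KEY,
    value TEXT NOT NULL
);
CREATE TABLE memory_embeddings (
    memory_id  TEXT NOT NULL REFERENCES memory_objects(id),
    model_name TEXT NOT NULL,
    vector     TEXT NOT NULL,   -- JSON array here; a vector column type in production
    PRIMARY KEY (memory_id, model_name)
);
""")

conn.execute("INSERT INTO memory_objects VALUES (?, ?)",
             ("m1", "prefers markdown in emails"))


def upsert_embedding(memory_id: str, model_name: str, vector: list) -> None:
    """One row per (memory, model): reindexing replaces, a new model adds."""
    conn.execute(
        "INSERT OR REPLACE INTO memory_embeddings VALUES (?, ?, ?)",
        (memory_id, model_name, json.dumps(vector)),
    )


# Two hypothetical embedding models coexist for the same record.
upsert_embedding("m1", "embed-model-a", [0.1, 0.2])
upsert_embedding("m1", "embed-model-b", [0.3, 0.4, 0.5])

# Retiring a model deletes derived rows only; the canonical record is untouched.
conn.execute("DELETE FROM memory_embeddings WHERE model_name = ?", ("embed-model-a",))

remaining_models = [row[0] for row in conn.execute(
    "SELECT model_name FROM memory_embeddings WHERE memory_id = ?", ("m1",))]
memory_count = conn.execute("SELECT COUNT(*) FROM memory_objects").fetchone()[0]
print(remaining_models, memory_count)
```

The composite primary key is what makes a vector index swap boring. Old and new embeddings can sit side by side during migration, and dropping the old model is a single delete.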

A reference architecture that scales

- Sources: chat transcripts, tool outputs, files, calendar events, code diffs.

- Capture: a streaming extractor that emits candidate memories with evidence.

- Canonical store: Postgres tables for memory_objects and memory_events.

- Index: vector store keyed by memory id with per model embeddings.

- MCP server: an internal service that exposes read and write contracts to agents.

- Retrieval: a task aware pipeline that filters by scope, salience, recency, and policy.

- Summarization: background jobs that build compact context packets for each task type.

- Governance: a policy engine connected to identity providers and data loss prevention rules.

- Observability: traces that attach memory reads and writes to each agent decision.

This design lets you start small and scale. If Mem0 is your memory layer, your MCP server can wrap its SDK for reads and writes, while your canonical store mirrors the facts you cannot afford to lose. If you later swap providers, your agents keep calling the same MCP methods.
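The swap claim is worth seeing in code. The sketch below does not use the MCP SDK or the Mem0 SDK. It models only the stable contract, with two method names borrowed from the checklist above and two toy backends standing in for a vendor and a homegrown store. Every class name here is hypothetical.

```python
from typing import Protocol


class MemoryBackend(Protocol):
    """What any store must provide: a vendor SDK wrapper or your own tables."""
    def put(self, scope: str, record: dict) -> None: ...
    def fetch(self, scope: str) -> list: ...


class DictBackend:
    """Stand-in for one provider."""
    def __init__(self):
        self._rows = {}

    def put(self, scope, record):
        self._rows.setdefault(scope, []).append(record)

    def fetch(self, scope):
        return list(self._rows.get(scope, []))


class TupleListBackend:
    """Stand-in for a different provider with a different internal layout."""
    def __init__(self):
        self._rows = []

    def put(self, scope, record):
        self._rows.append((scope, record))

    def fetch(self, scope):
        return [record for s, record in self._rows if s == scope]


class MemoryService:
    """The contract agents call. Backends change; these methods do not."""
    def __init__(self, backend: MemoryBackend):
        self._backend = backend

    def write_with_provenance(self, scope: str, value: str, evidence: list) -> None:
        if not evidence:
            raise ValueError("refusing to write a memory without provenance")
        self._backend.put(scope, {"value": value, "evidence": list(evidence)})

    def read_by_scope(self, scope: str) -> list:
        return [record["value"] for record in self._backend.fetch(scope)]


def agent_turn(service: MemoryService) -> list:
    """Agent side code: identical regardless of which backend is plugged in."""
    service.write_with_provenance("team", "product ships in Q1", ["doc_42"])
    service.write_with_provenance("personal", "prefers 30 minute meetings", ["msg_7"])
    return service.read_by_scope("team")


before_swap = agent_turn(MemoryService(DictBackend()))
after_swap = agent_turn(MemoryService(TupleListBackend()))
print(before_swap == after_swap)
```

Rejecting writes without evidence at the contract level also addresses over promotion of transient context: a snippet with no provenance never becomes durable memory, whichever backend sits behind the service.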

Field notes from early adopters

- Customer support copilots: replacing transcript replay with memory objects cut median response time by a double digit percentage and reduced hallucinated follow ups, since the agent stopped guessing user preferences from long, noisy threads.

- Sales workflows: moving product facts to team scoped memory and personal notes to rep scoped memory prevented embarrassing cross account leaks when a representative switched territories.

- Coding agents: combining a desktop sandbox with per project memory simplified multi day refactors. The memory layer tracked files touched, decisions made, and pending todos, which the agent used to pick up work the next morning.

The consistent pattern is that durable memory lowers latency and raises trust. The consistent failure mode is over promotion of transient context into durable memory. Solve that by requiring provenance and by testing forgetting explicitly.

Questions teams are asking now

- Do we need a memory layer if our context window is huge? Yes. Large windows are great for short sessions, but they are expensive and brittle for long lived work. Memory turns repeated context into compact, governed, and auditable objects.

- Should we build or buy? Treat build vs buy as a contract decision. If you can define a portable schema, an MCP server, and export guarantees, you can start with a vendor and maintain strategic control.

- How many memory types should we start with? Five to ten types are enough for most teams to cover preferences, identifiers, assets, skills, and constraints. Expand only when the Memory Inspector reveals repeated gaps.

- What about safety? Redaction, scope boundaries, and audit trails are your first line of defense. A memory layer that cannot show its work will fail security reviews and user trust.

A 90 day rollout plan

- Days 1 to 15: define the schema, pick a canonical store, and wire an MCP server that implements read, write, update, and search. Ship a very small Memory Inspector.

- Days 16 to 45: instrument one agent end to end. Seed five memory types. Add a nightly Memory Eval job with recall, forgetting, and contamination tests.

- Days 46 to 75: integrate governance. Add consent screens, scopes, and retention. Turn on redaction in capture. Start logging reads and writes with user visible reasons.

- Days 76 to 90: harden portability. Prove export and restore. Test a model swap and a vector index swap without changing agent prompts. Document the vendor lock in risk map and mitigation for your stakeholders.

The bottom line

Mem0’s funding is not just good news for one company. It validates a shift that was already underway. Protocols like MCP make tools portable. Agent clouds like AgentCore and E2B make long sessions routine. Memory is no longer a prompt trick. It is the substrate your agents stand on.

The teams that win in 2026 will design memory as a product surface, with portability, governance, and evaluation baked in from day one. They will budget tokens for memory, measure what matters, and demand export guarantees from vendors. They will also keep users in the loop with a Memory Inspector that shows what the agent believes and why.

If you are building a browser native agent or moving an API to an agent first model, revisit the patterns we explored in "When the browser becomes the agent" and "APIs go agent first". Then put a memory layer under them. The best agent experiences in 2026 will feel fast, accurate, and trustworthy not because they remember everything, but because they remember the right things and can prove it.