From Tokens to Torque: Humanoids Clock In at Factories

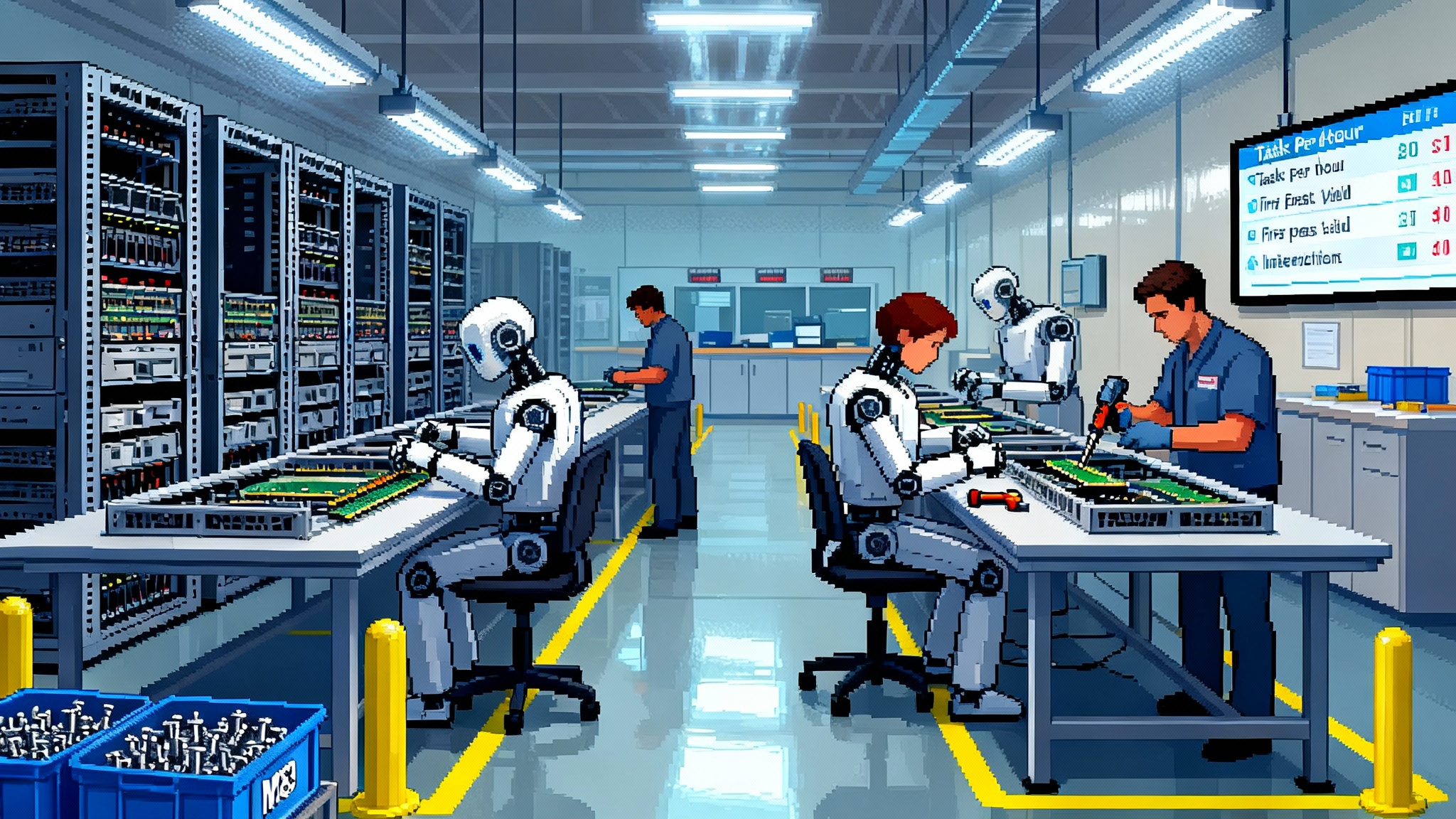

Foxconn is adding humanoid robots to its Houston AI-server lines, running NVIDIA’s GR00T N models on Jetson Thor with skills trained in the Newton physics engine. The scoreboard shifts from tokens to tasks per hour, and alignment becomes rigorous contact moderation.

The day software grew hands

On October 29, 2025, Foxconn said it will put humanoid robots to work on the lines at its Houston plant that builds AI servers. That might sound like a futurist rerun, but the details matter. These robots build on NVIDIA’s Isaac GR00T N models and are slated to perform specific, repetitive steps in server assembly. It is the kind of announcement that nudges a trend into a milestone. Screens are no longer the boundary of artificial intelligence. The unit of progress is shifting from tokens per second to tasks per hour. For context, see Reuters’ report that Foxconn will deploy humanoid robots.

Factory managers have tested robots for decades. What is different now is that language-trained intelligence is beginning to control motion at commercial speed and cost, with a stack designed for learning physical skills, not only parsing text. Picture it as a compiler for movement. Skills arrive as recipes, demonstrations, and simulations. They are optimized into torque limits, joint trajectories, and grasp strategies that can run on a production line.

The new metric: tasks per hour

In virtual AI, we watch tokens per second and latency. In physical AI, the scoreboard moves to tasks per hour, first pass yield, and mean time to recovery when a robot gets stuck. A task is not a slogan. It is one labeled, auditable unit of work, such as pick heat sink, apply thermal paste, or tighten four M3 screws to 0.5 newton-meters.

This change is more than semantics. Once a factory frames progress as tasks per hour, the product and operations questions become crisp:

- What is the retry rate when a screw misthreads?

- How often does a human need to intervene per thousand tasks?

- How long does it take a robot to replan a grasp when a part is skewed two millimeters?

- What is the distribution of cycle times, not only the average?

- How many protective stops occur per shift, and why?

These questions are levers that turn machine intelligence into throughput and margin. They also create a shared scoreboard for engineers, operators, safety leads, and finance.
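A minimal Python sketch of how a task log answers a few of these questions, assuming a hypothetical per-task record (`TaskRecord` and its fields are illustrative, not any vendor's schema). It reports tail cycle time alongside the mean, the retry rate, and protective stops grouped by shift and cause.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from statistics import quantiles
from typing import Optional


@dataclass
class TaskRecord:
    """One labeled, auditable unit of work (hypothetical log schema)."""
    task: str                          # e.g. "tighten_m3_x4"
    cycle_s: float                     # start to verified completion, in seconds
    retries: int = 0                   # re-attempts, e.g. after a misthread
    shift: str = "A"                   # shift the task ran in
    stop_cause: Optional[str] = None   # cause code if a protective stop fired


def cycle_time_profile(records: list[TaskRecord]) -> dict[str, float]:
    """Mean plus median and 95th-percentile cycle time (needs at least 2 records)."""
    times = [r.cycle_s for r in records]
    cuts = quantiles(times, n=100, method="inclusive")  # 99 percentile cut points
    return {"mean": sum(times) / len(times), "p50": cuts[49], "p95": cuts[94]}


def retry_rate(records: list[TaskRecord]) -> float:
    """Fraction of tasks that needed at least one retry."""
    return sum(1 for r in records if r.retries > 0) / len(records)


def stops_per_shift(records: list[TaskRecord]) -> dict[str, Counter]:
    """Protective stops grouped by shift, then counted by cause code."""
    out: dict[str, Counter] = {}
    for r in records:
        if r.stop_cause is not None:
            out.setdefault(r.shift, Counter())[r.stop_cause] += 1
    return out
```

Tracking p95 next to the mean surfaces the occasional slow, retried task that an average hides.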

What changed under the hood

Three technical shifts converged in 2025.

- A generalist control model for humanoids moved from keynote slide to developer workstation. GR00T N1 and its follow-ons are vision-language-action models that encode reusable skills: a robot can take a natural language instruction, map it through camera input to motor actions, and execute with closed-loop perception.

- A physics engine purpose-built for robot learning matured and became available to practitioners. Newton provides the numerical backbone to teach robots in simulation and transfer those skills with fewer surprises on the floor.

- On-robot compute finally met the model. Jetson Thor-class modules let a robot run multiple perception and policy networks at real-time rates, so it can see, reason, and act without shipping every frame to a data center.

NVIDIA’s fall updates made this stack usable at scale, including Newton’s release in Isaac Lab and the GR00T N1.6 update that tightened the loop between simulated learning and on-robot inference. See NVIDIA’s summary of the Newton and GR00T N1.6 releases.

Individually, these look like small tweaks. In combination, they change the curve. Simulation produces skills. The model stitches them into plans. The hardware executes those plans while sensing contact, slip, and force in real time.

Factories become cognitive shops

Factories used to be mechanical theaters. Now they are becoming cognitive shops. You do not just install a machine. You install skills, then you refactor them.

Imagine the line as a codebase. Parts bins are libraries. Skill policies are functions. The work plan is a directed acyclic graph that composes those functions with timing, sensing, and error handling. Over time you build a private package manager of skills: screw size families, cable routing, pallet picks, button pushes, panel insertions. You tag each skill with context: lighting, surface friction, allowable contact forces, and sensor requirements. Then you compile for a specific cell and robot body.
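To make the "compile for a specific cell" step concrete, here is a minimal sketch, assuming a hypothetical registry where each skill carries its context tags (required sensors, validated lighting, allowable contact force). `Skill`, `Cell`, and `compile_for_cell` are illustrative names, not an NVIDIA or Isaac Lab API; compiling here just means checking each skill's tags against the target cell and explaining every rejection.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Registry entry: a versioned skill tagged with the context it was validated in."""
    name: str
    version: str
    required_sensors: frozenset[str]
    max_contact_force_n: float   # allowable contact force for this skill
    min_lux: float = 0.0         # dimmest lighting the skill was validated under


@dataclass(frozen=True)
class Cell:
    """The target cell and robot body the skills are compiled for."""
    name: str
    sensors: frozenset[str]
    lux: float
    force_limit_n: float         # contact force ceiling set by the cell's safety case


def compile_for_cell(skills: list[Skill], cell: Cell) -> tuple[list[Skill], dict[str, list[str]]]:
    """Split skills into deployable ones and rejects with human-readable reasons."""
    deployable: list[Skill] = []
    rejected: dict[str, list[str]] = {}
    for s in skills:
        reasons = []
        missing = s.required_sensors - cell.sensors
        if missing:
            reasons.append(f"missing sensors: {sorted(missing)}")
        if cell.lux < s.min_lux:
            reasons.append(f"lighting {cell.lux} lux below validated {s.min_lux} lux")
        if s.max_contact_force_n > cell.force_limit_n:
            reasons.append(
                f"contact budget {s.max_contact_force_n} N exceeds cell limit {cell.force_limit_n} N"
            )
        if reasons:
            rejected[f"{s.name}@{s.version}"] = reasons
        else:
            deployable.append(s)
    return deployable, rejected
```

Returning reasons rather than a bare pass/fail keeps the registry auditable: a rejected skill tells you whether to add a sensor, fix lighting, or retrain.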

The payoff is reuse. When a customer adds a new server variant with a different memory layout, you do not start from scratch. You adjust the skill graph, retrain a few edge cases in simulation, and push an update to the same humanoids overnight. This is why the cognitive shop mental model matters. The value is less in one robot and more in the growing skill library, the tests that guard it, and the data that hardens it. If you want a parallel on the software side, see how agents learn to click once the UI becomes programmable. The factory is following a similar arc, only with torque and contact instead of clicks.

Skills compile into motion

People often ask whether general purpose humanoids are necessary. Many factory tasks can be done with fixed arms or mobile manipulators. The software lens clarifies the tradeoff. A humanoid is a complex runtime that can load more skill libraries without retooling the cell. In a product family with frequent change, that flexibility is worth the extra joints.

Consider a simple example: installing memory and cooling on a motherboard. The skill graph might include locating slots, orienting a memory stick, applying even pressure, waiting for the audible click, then placing a heat spreader and tightening its two screws alternately in partial turns so it seats evenly. The same subgraph shows up in different products. Once your sim-to-real pipeline proves that graph for one robot morphology, you can recompile it for another body plan. The code carries more value than the hardware.
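The memory and cooling example can be sketched as a directed acyclic graph using Python's standard-library `graphlib` (Python 3.9+). The skill names and the second product are hypothetical; the point is that the memory subgraph is defined once and composed into two different work plans, and the sorter yields both an execution order and groups of steps with no unmet dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical skill names. Each key maps to the set of skills that must finish first.
MEMORY_INSTALL = {
    "locate_slots": set(),
    "orient_dimm": {"locate_slots"},
    "press_dimm_evenly": {"orient_dimm"},
    "confirm_latch_click": {"press_dimm_evenly"},
}
COOLING_INSTALL = {
    "locate_screw_holes": set(),
    "place_heat_spreader": {"confirm_latch_click", "locate_screw_holes"},
    "tighten_screws_alternating": {"place_heat_spreader"},
}
# A hypothetical second product that reuses the memory subgraph unchanged.
FAN_MODULE = {
    "seat_fan": {"confirm_latch_click"},
    "plug_fan_cable": {"seat_fan"},
}


def compose(*graphs: dict[str, set[str]]) -> dict[str, set[str]]:
    """Merge subgraphs into one work plan, unioning dependencies of shared nodes."""
    merged: dict[str, set[str]] = {}
    for g in graphs:
        for node, deps in g.items():
            merged.setdefault(node, set()).update(deps)
    return merged


def plan(graph: dict[str, set[str]]) -> list[str]:
    """A linear execution order respecting every dependency (raises CycleError on cycles)."""
    return list(TopologicalSorter(graph).static_order())


def parallel_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Groups of steps whose prerequisites are all done; each group could run concurrently."""
    ts = TopologicalSorter(graph)
    ts.prepare()
    waves = []
    while ts.is_active():
        ready = sorted(ts.get_ready())
        waves.append(ready)
        ts.done(*ready)
    return waves


server_a = compose(MEMORY_INSTALL, COOLING_INSTALL)
server_b = compose(MEMORY_INSTALL, FAN_MODULE)
```

Because the sorter rejects cycles, a bad edit to the skill graph fails at plan time rather than on the line.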

Alignment becomes contact moderation

Alignment in screen-based AI is about language harms and content filters. Alignment in physical AI is about contact: who gets hurt, who pays, and how you prevent incidents. Factories already run on safety culture, but humanoids introduce new edge cases where general purpose motion meets messy reality.

Three principles help teams steer this transition.

- Shift from guardrails to budgets. Instead of only fencing off a robot, assign a contact budget per task. For example, the robot may not exceed a specified contact force when guiding a cable past a sharp edge or when operating near a human coworker. The budget lives in the skill policy and is enforced by torque sensing, force limits, and speed and separation monitoring.

- Make liability legible. For each task, write a safety case that ties the skill to its hazards, the mitigations, the tests that validate those mitigations, and the logs that prove they ran. This is the evolution from content moderation to contact moderation. It is paperwork, but it is also product.

- Treat the insurer as a design partner. Carriers will ask for evidence that your simulation tests map to physical risk reduction. Build the dashboards early. Show near misses, slowdowns, and protective stops per thousand tasks. When you can prove that a software update reduced elbow-to-human proximity events by half, your premiums will tell the story.
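A minimal sketch of a per-task contact budget living in software, assuming hypothetical field names and example values. The separation check is a simplified version of the speed and separation monitoring idea (human travel during reaction and stopping time, robot travel during reaction time, robot stopping distance, plus a margin), loosely following the structure used in ISO/TS 15066. It illustrates where the budget lives, not a safety-rated implementation; in a real cell these limits are enforced by safety-rated controllers and sensors.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    SLOW = "slow"
    PROTECTIVE_STOP = "protective_stop"


@dataclass(frozen=True)
class ContactBudget:
    """Per-task budget that travels with the skill policy (illustrative fields)."""
    max_force_n: float          # contact force ceiling for this task
    reaction_time_s: float      # sensing plus controller latency
    stopping_time_s: float      # time for the robot to come to rest once commanded
    stopping_distance_m: float  # distance the robot travels while stopping
    margin_m: float             # allowance for intrusion and measurement uncertainty
    slow_band: float = 1.5      # hypothetical: slow down inside 1.5x the required distance


def required_separation_m(b: ContactBudget, human_speed: float, robot_speed: float) -> float:
    """Simplified protective separation distance; speeds in m/s."""
    human_travel = human_speed * (b.reaction_time_s + b.stopping_time_s)
    robot_travel = robot_speed * b.reaction_time_s
    return human_travel + robot_travel + b.stopping_distance_m + b.margin_m


def enforce(b: ContactBudget, force_n: float, separation_m: float,
            human_speed: float, robot_speed: float) -> Action:
    """Decide what the controller should do this cycle."""
    if force_n > b.max_force_n:
        return Action.PROTECTIVE_STOP  # contact budget exceeded
    required = required_separation_m(b, human_speed, robot_speed)
    if separation_m < required:
        return Action.PROTECTIVE_STOP  # too close to stop in time
    if separation_m < b.slow_band * required:
        return Action.SLOW             # inside the slowdown band
    return Action.CONTINUE
```

Every SLOW and PROTECTIVE_STOP decision is also a row for the insurer dashboard described above.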

If you have been following the rise of verifiable software practices in AI, you will recognize the rhyme. In digital systems, attested AI is becoming the default. In physical systems, attestation looks like traceable safety cases and tamper-evident logs. The mechanisms differ, but the principle is shared: prove behavior, not just intent.
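Tamper-evident logging can be as simple as a hash chain: each entry stores the SHA-256 of the previous entry's hash plus its own canonical JSON, so editing or reordering an entry breaks every link after it. This is a minimal standard-library sketch with hypothetical event fields. A chain only proves integrity if its latest hash is also recorded somewhere the log writer cannot rewrite, such as a separate audit system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical events hash identically.
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> str:
    """Append an event chained to the previous entry; returns the new head hash.

    Anchor the returned head hash outside this log, or truncation goes unnoticed.
    """
    prev = log[-1]["hash"] if log else GENESIS
    entry_hash = _digest(prev, event)
    log.append({"event": event, "prev": prev, "hash": entry_hash})
    return entry_hash


def verify(log: list) -> bool:
    """Recompute every link; an edited, reordered, or removed interior entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or _digest(prev, entry["event"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```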

The sim-to-real moat

The strongest advantage in physical AI is not a single algorithm. It is the culture and tooling that keeps simulation in sync with reality. A credible sim-to-real loop has six layers:

- Asset fidelity. Every bolt hole, connector tolerance, and fastener location is captured. Do not skimp on the parts that feel boring. Those are the ones that cause downtime.

- Sensor realism. Cameras, depth sensors, and force-torque readings are modeled with noise and latency. Lighting changes, lens smudges, and glare are built into datasets.

- Domain randomization. You vary pose, friction, and clutter so the policy learns invariants. When the real world shifts, your model treats it as a draw from a familiar distribution.

- Policy evaluation. You run thousands of episodes with seeded randomness and log failure modes with standardized tags such as misgrasp, slip, occlusion, misalignment, and part absent. The tags are the breadcrumbs that drive fixes.

- Handover choreography. You design exact protocols for handovers between robots and humans. Timing and signals are treated as first class, not afterthoughts.

- Telemetry and backprop. You stream real incidents from the floor back into simulation, then prioritize new training runs by business impact. Failures that consume minutes of operator time go to the front of the queue.
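A toy sketch of three of these layers working together: seeded domain randomization, policy evaluation with standardized failure tags, and ranking floor incidents by operator minutes. `toy_policy` is a hypothetical stand-in with made-up failure probabilities, not a real rollout; in practice each episode would run in a simulator such as Isaac Lab. The fixed seed is what makes a failure reproducible when an engineer sits down to fix it.

```python
import random
from collections import Counter

# Standardized failure tags from the evaluation layer.
FAILURE_TAGS = ("misgrasp", "slip", "occlusion", "misalignment", "part_absent")


def sample_scene(rng: random.Random) -> dict:
    """Domain randomization: draw pose, friction, clutter, and lighting for one episode."""
    return {
        "pose_offset_mm": rng.uniform(-2.0, 2.0),
        "friction": rng.uniform(0.3, 0.9),
        "clutter_items": rng.randint(0, 5),
        "lux": rng.uniform(200.0, 1000.0),
    }


def toy_policy(scene: dict, rng: random.Random):
    """Hypothetical stand-in for a policy rollout: None on success, else a failure tag."""
    if rng.random() < 0.02:
        return "part_absent"
    if scene["friction"] < 0.4 and rng.random() < 0.3:
        return "slip"
    if abs(scene["pose_offset_mm"]) > 1.5 and rng.random() < 0.4:
        return "misalignment"
    if scene["clutter_items"] >= 4 and rng.random() < 0.2:
        return "occlusion"
    if scene["lux"] < 300.0 and rng.random() < 0.2:
        return "misgrasp"
    return None


def evaluate(episodes: int, seed: int) -> Counter:
    """Run seeded episodes and tally failures by tag; same seed, same results."""
    rng = random.Random(seed)
    tags = Counter()
    for _ in range(episodes):
        tag = toy_policy(sample_scene(rng), rng)
        if tag is not None:
            tags[tag] += 1
    return tags


def prioritize(floor_incidents: list) -> list:
    """Rank failure tags by total operator minutes lost on the floor, largest first."""
    minutes = Counter()
    for tag, operator_minutes in floor_incidents:
        minutes[tag] += operator_minutes
    return [tag for tag, _ in minutes.most_common()]
```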

Companies that build this loop well accumulate a moat that looks mundane from the outside. It is test sets, labeled failures, calibration routines, and a habit of writing postmortems. That habit, not the robot’s smile, is what wins uptime wars.

A 12-month field guide for operators

If you run a plant, here is a pragmatic playbook that starts small and compounds.

- Choose two or three tasks with stable parts and modest variance. Aim for operations with sub-minute cycle times and clear success criteria such as screw insertion, connector mating, or tray loading.

- Instrument before you automate. Add cameras, torque sensors, and machine data collection that can timestamp events at millisecond resolution. You cannot improve what you cannot see.

- Build the digital twin. Import exact geometry for your cell and parts. Create a canonical test scene that every new skill must pass before it goes near a line.

- Create a skill registry. Write a one page spec per skill: inputs, outputs, hazards, contact budgets, and recovery behavior. Version everything.

- Hire for sim and safety. You need an Isaac Lab expert, a perception engineer, and a safety lead who has shipped collaborative cells. If you can fill only one role first, make it the safety lead.

- Define the intervention protocol. Decide who gets paged when a robot pauses and how they resolve it. Log cause codes with a short drop-down so you can trend them later.

- Pilot, then productize. Run the first month as a supervised pilot with daily failure reviews. In month two, freeze the policy unless the failure rate breaches a threshold. This forces you to solve root causes, not chase hunches.

- Close the insurer loop. Share your safety case, near miss rates, and change management process. Ask what additional evidence would lower premiums.

- Tackle the next three tasks. Reuse as much of the skill graph as possible. Measure what percent of code and tests were reused. Celebrate reuse, not novelty.

- Staff the care team. Robots need care and feeding. Establish weekly calibration, lens cleaning, and torque sensor checks. Maintenance is a product feature.
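Three of these steps reduce to small, checkable rules. A minimal sketch, assuming hypothetical cause codes and using skill IDs as one possible proxy for "code and tests reused": trend pause causes, apply the month-two freeze rule, and measure reuse on the next line.

```python
from collections import Counter
from enum import Enum


class CauseCode(Enum):
    """Hypothetical short drop-down an operator picks from when resolving a pause."""
    MISTHREAD = "misthread"
    PART_SKEWED = "part_skewed"
    SENSOR_DIRTY = "sensor_dirty"
    OTHER = "other"


def cause_trend(pauses: list[CauseCode]) -> list[tuple[str, int]]:
    """Most frequent cause codes first, ready to trend week over week."""
    return [(code.value, count) for code, count in Counter(pauses).most_common()]


def policy_unfrozen(failures: int, tasks: int, threshold: float) -> bool:
    """Month-two rule: keep the policy frozen unless the failure rate breaches the threshold."""
    return failures / tasks > threshold


def reuse_percent(new_plan: set[str], registry: set[str]) -> float:
    """Share of a new line's skills that already existed in the registry."""
    return 100.0 * len(new_plan & registry) / len(new_plan)
```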

The scorecard everyone should share

Moving to tasks per hour does not mean abandoning human-centered metrics. It means unifying them. Four numbers tell the story across operations, engineering, and finance:

- Tasks per hour. The deliberate replacement for tokens per second. Report by task family, not only by robot.

- First pass yield. The percent of tasks that complete without human touch. This is what reduces labor hours and variable cost.

- Interventions per thousand tasks. A measure of how often robots demand attention. Aim to cut this in half each quarter on the first two lines.

- Mean time to recovery. The average time from pause to resume. It tells you whether your recovery behavior is crisp or confused.

Put these numbers on a single monitor at the cell and in the executive review. When they trend in the right direction, you are building a real capability, not a demo.
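A minimal sketch of the four numbers computed from one event log, assuming a hypothetical schema in which each completed task records its human interventions as (pause, resume) timestamps in seconds. An intervention here means any pause that needed attention, so first pass yield counts tasks with none.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TaskEvent:
    """One completed task and the interventions it needed (hypothetical schema)."""
    family: str  # e.g. "screw_insertion"
    interventions: list[tuple[float, float]] = field(default_factory=list)  # (paused_at_s, resumed_at_s)


def scorecard(events: list[TaskEvent], window_hours: float) -> dict[str, float]:
    """Tasks per hour, first pass yield, interventions per thousand, and MTTR."""
    n = len(events)
    recoveries = [resume - pause for e in events for pause, resume in e.interventions]
    first_pass = sum(1 for e in events if not e.interventions)
    return {
        "tasks_per_hour": n / window_hours,
        "first_pass_yield_pct": 100.0 * first_pass / n,
        "interventions_per_1000": 1000.0 * len(recoveries) / n,
        "mttr_s": sum(recoveries) / len(recoveries) if recoveries else 0.0,
    }


def by_family(events: list[TaskEvent], window_hours: float) -> dict[str, dict[str, float]]:
    """The same scorecard per task family, so an easy family cannot mask a hard one."""
    groups: dict[str, list[TaskEvent]] = defaultdict(list)
    for e in events:
        groups[e.family].append(e)
    return {fam: scorecard(evts, window_hours) for fam, evts in groups.items()}
```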

Who benefits first, and why

Humanoids will not take over the whole factory at once. The earliest wins cluster where parts are light, variability is moderate, and space is tight. Think of server assembly, small form factor electronics, consumer devices, parcel handling, and late-stage kitting.

There will be niches where dedicated arms beat generalists on speed and cost. That is fine. The goal is not to replace everything. It is to make factories more software defined so production can change as fast as the product roadmap. When the mix shifts, your skill registry and simulation assets let you pivot in days, not months. As these deployments scale, remember that power and thermal budgets grow into strategic constraints. For the broader backdrop on energy as strategy, see why power becomes the moat.

Policy is production now

As robots turn from research to revenue, policy stops being an abstract debate and becomes an engineering input. Workers will want clarity on redeployment, training, and pay. Unions will have concrete questions about proximity and pace. Regulators and auditors will ask for traceability from incident to remedy. None of this is a reason to slow down. It is a reason to show your work.

The practical path is to turn policy questions into dashboards and documents you already maintain for quality. If a robot slowed near a human 1,200 times last week, who verified that the slowdowns were within the contact budget? If an elbow exceeded a force threshold, what corrective release shipped and how did you verify it? The more your answers look like software hygiene, the less your plant looks like a science experiment. Over time, the same kinds of evidence that make digital systems trustworthy will make physical AI legible.

The quiet arrival

The story here is not a single company, though Foxconn will make headlines as humanoids arrive in Houston. Nor is it one vendor, though NVIDIA’s stack has momentum. It is the normalization of physical AI as factory software. The interesting part is that there may be very little drama. The robots will clock in, fumble, improve, and settle into the rhythm of production. They will measure themselves not by clever quips but by cycle time.

We are stepping into a world where skills compile into motion, where alignment is about contact, where the best factories are cognitive shops with strong sim-to-real habits. If tokens were the breath of virtual AI, torque is the heartbeat of physical AI. The pulse just became audible on the floor. Now the work begins. Turn language into throughput, one task per hour at a time.