The Price of Thought Collapses: Reasoning Becomes a Dial

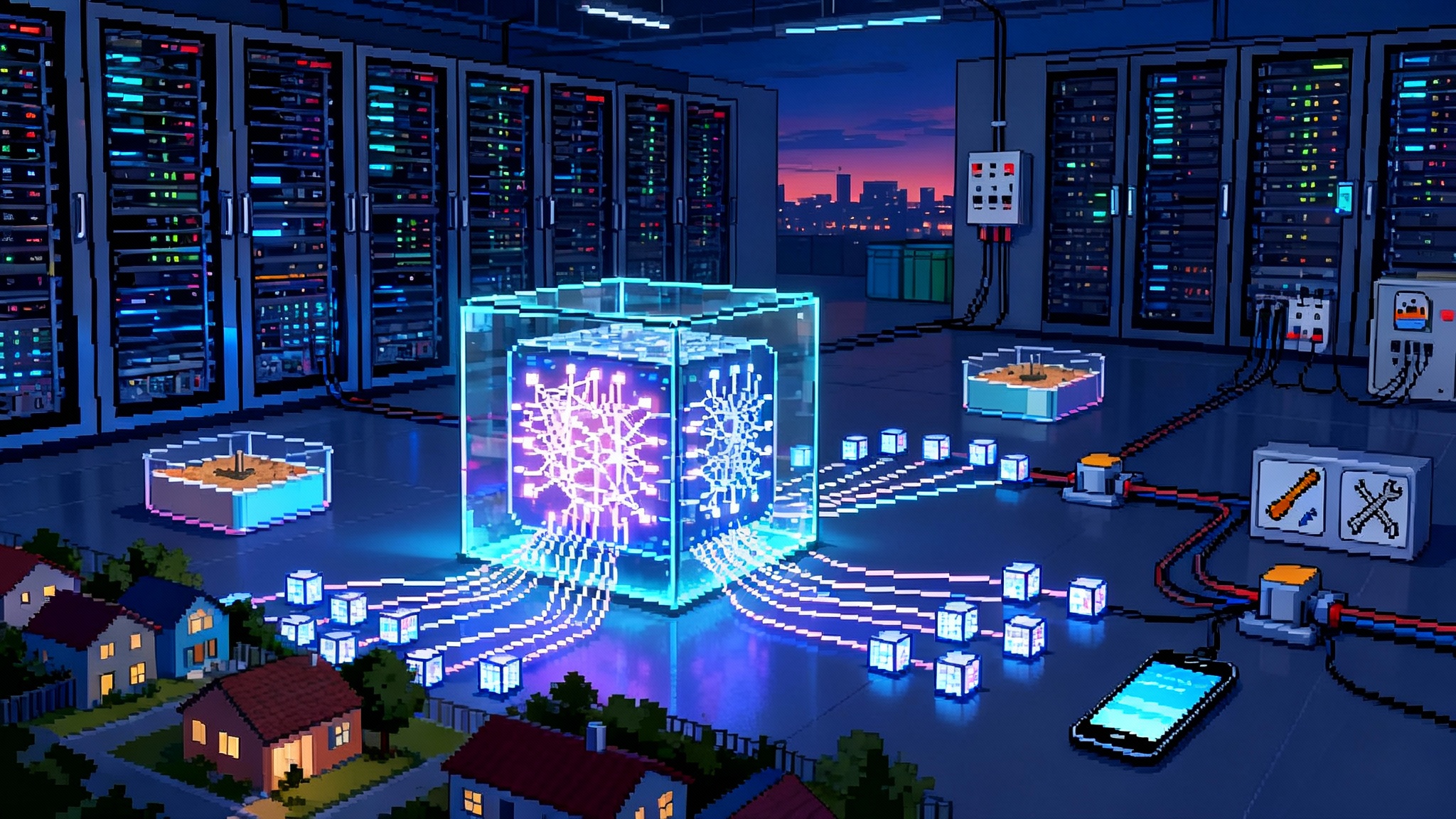

In 2025, users began selecting how hard their AI thinks. OpenAI turned test time compute into a visible control and DeepSeek pushed costs down. The result is a new primitive for apps and contracts: metered thought you can budget.

Breaking news: thinking just became a user setting

On January 31, 2025, OpenAI introduced a simple but radical idea in production systems. Developers and everyday users can pick how hard the model thinks before it replies. Three effort levels trade time and money for deeper reasoning. In April 2025, with the arrival of newer models and wider availability across products, the concept crystallized into a product primitive rather than a research trick. Choose effort, pay for thought, get depth.

Meanwhile, DeepSeek took a complementary path. It released an open reasoning model and emphasized cost. News coverage noted that DeepSeek positioned R1 as open and less expensive than Western rivals, fueling rapid adoption. For context, see the coverage of how DeepSeek positioned R1 as open and cheaper.

Taken together, these moves made something subtle but profound visible to users. Test time compute is now a dial you can turn. The price of thought fell, and the act of thinking became metered.

From static answers to metered cognition

For years, a model’s intelligence felt fixed. You asked a question, got a single pass answer, and hoped it was good enough. Researchers already knew that giving a system more steps during inference improves results. Techniques like chain of thought, self consistency sampling, verifier calls, and tool use let a model think longer before committing.

In 2025, those techniques crossed the line from lab to interface. They turned into a slider or preset that anyone can touch. You dial effort up when the question matters, or down when speed and cost are the priority. That is a profound UI shift. The plumbing is technical and expensive, but the user control is simple and legible.

Think of it like water pressure. Most of the time medium is fine. When the job is tough, you turn the dial up and accept the extra flow and time. The bill reflects the choice. Responsibility moves from hidden heuristics to explicit policy and user intent.

What changed under the hood

Two design decisions converged.

- Vendors trained models that benefit predictably from more test time compute. More scratchpad tokens, more candidate chains with voting, more tool calls, or a verifier pass produce a measurable lift on hard tasks.

- Platforms exposed a clean abstraction that maps effort to latency and cost. Under the preset, the system allocates steps and tools according to policy. The user does not need to see scratchpads or raw chain of thought. They only need confidence that high effort takes longer, costs more, and more often gets difficult things right.

Open models like R1 showed how aggressive pricing and openness can make deep reasoning feel casual. Structured presets in closed systems made the cost and latency predictable enough to plan. The combination explains why metered cognition is spreading from coding assistants to research tools, customer support, procurement, and analytics.

The new primitive in product design

Once reasoning effort is a first class control, product teams design around it rather than bury it. Practical patterns include:

- Plain language presets. Quick, Standard, Deep. Tie each to clear expectations like 1 to 3 seconds, 3 to 10 seconds, and 10 to 60 seconds. Show a simple cost meter that rises with depth.

- Context aware upgrades. Let the model propose raising effort when it detects multi step math, retrieval across long context, or high consequence decisions. The user approves with one click.

- Progressive elaboration. Start cheap and escalate automatically when confidence is low or contradictions appear. Explain the escalation and give a receipt for the extra steps.

- Receipts for thought. Expose verifiable traces of what happened at high effort. Show tools invoked, checks performed, tests passed, and verifier outcomes. This is not free form chain of thought. It is structured evidence that makes quality auditable.

The effect is that reasoning becomes a design element. Your application does not just answer questions. It negotiates the right level of thinking for each situation.
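As a rough illustration of the presets and progressive elaboration patterns, the sketch below starts at the cheapest preset and escalates only while confidence stays low, recording a receipt for each step. The latency ceilings come from the preset expectations above; the `relative_cost` multipliers, the `run_model` callback, and the 0.8 confidence threshold are hypothetical placeholders, not values from any vendor.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Preset:
    name: str
    max_seconds: float    # upper end of the latency expectation for this preset
    relative_cost: float  # hypothetical cost multiplier, not a real price


PRESETS = [
    Preset("Quick", 3.0, 1.0),
    Preset("Standard", 10.0, 4.0),
    Preset("Deep", 60.0, 20.0),
]


@dataclass
class Receipt:
    steps: List[str] = field(default_factory=list)
    total_cost: float = 0.0


def answer_with_escalation(
    question: str,
    run_model: Callable[[str, Preset], Tuple[str, float]],
    min_confidence: float = 0.8,
) -> Tuple[str, Receipt]:
    """Try presets from cheapest to deepest; stop once confidence is high enough."""
    receipt = Receipt()
    answer = ""
    for preset in PRESETS:
        # run_model is an assumed callback returning (answer, confidence in [0, 1]).
        answer, confidence = run_model(question, preset)
        receipt.total_cost += preset.relative_cost
        receipt.steps.append(f"{preset.name}: confidence={confidence:.2f}")
        if confidence >= min_confidence:
            break
    return answer, receipt
```

A real product would likely ask the user before moving to the Deep preset, since that is where most of the cost sits; the loop above escalates automatically only to keep the example short.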

For a deeper take on how trust gets attached to system evidence, see our discussion of attested AI becomes default. When proof attached to outputs becomes standard, effort presets plug directly into trust policies.

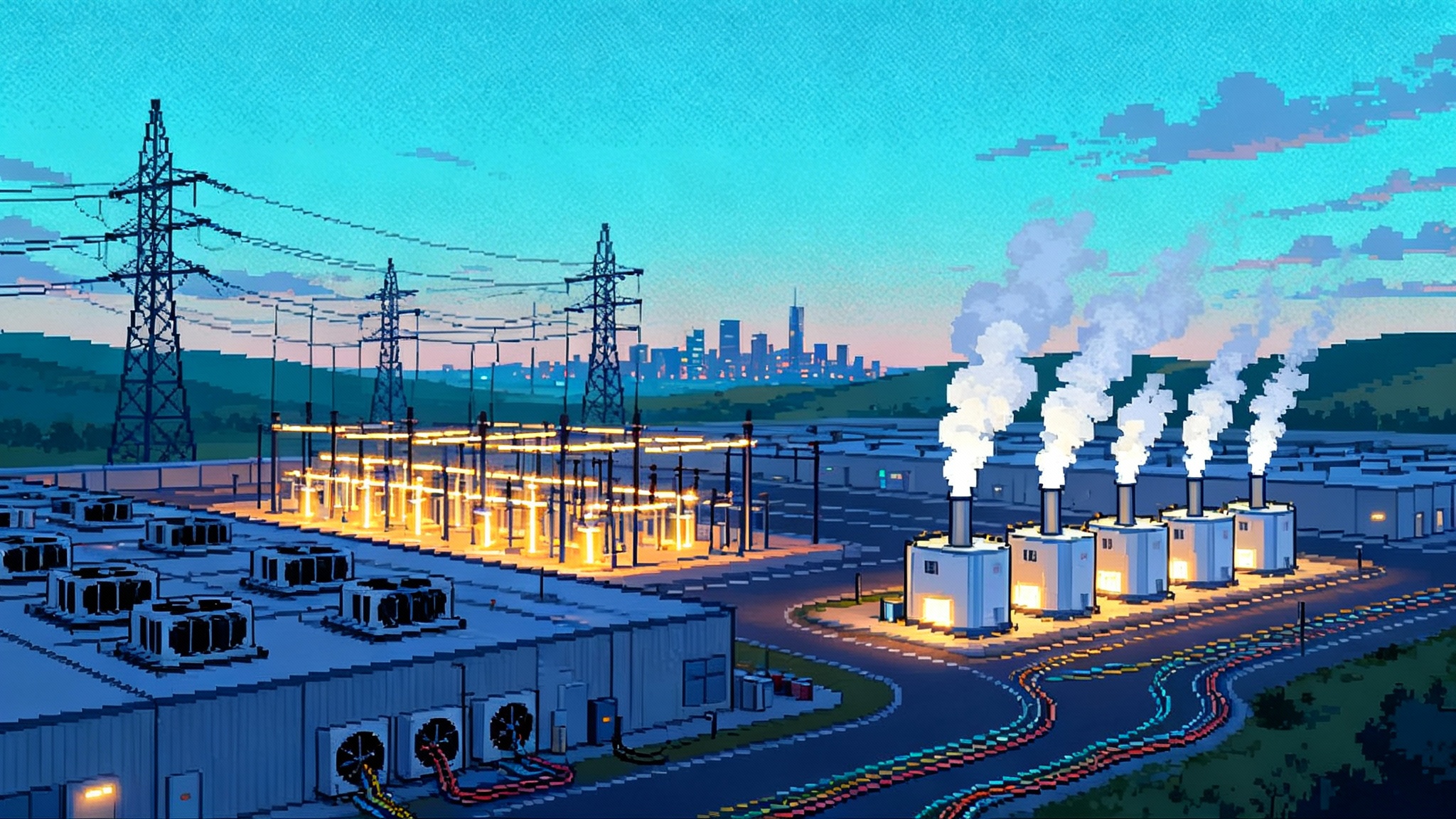

Operations gets a new budget line: thought minutes

If effort is a dial, someone pays to turn it. Enter thought minutes. Similar to compute credits, thought minutes quantify how much reasoning time or tool usage a workflow can consume.

Concrete controls look like this:

- Per request caps. No single operation can spend more than N seconds of model reasoning or more than X tool calls without explicit approval.

- Per session budgets. Each agent session draws from an allowance that refills daily or weekly. Policies can grant temporary overdraft for critical events.

- Priority queues. High consequence tasks borrow extra thought minutes from a shared pool. Low value requests wait or run at lower effort.

- Trace caching. When the same complex query repeats, reuse the validated steps and verifier results. Pay for verification, not for rediscovery.

The surprise is how far cheap heuristics go in triage. A tiny classifier can route easy prompts to low effort and reserve heavy thinking for problems that truly need it. When hard tasks reappear, cached reasoning saves both time and money.
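The controls above could be wired together in many ways. The following is a minimal sketch, assuming thought minutes are tracked as seconds of reasoning time and that a trace cache is keyed on a normalized query string. The `ThoughtBudget` class and its method names are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ThoughtBudget:
    session_allowance: float  # reasoning seconds available to this session
    per_request_cap: float    # most a single request may use without approval
    spent: float = 0.0
    traces: Dict[str, str] = field(default_factory=dict)

    def reserve(self, requested_seconds: float, approved: bool = False) -> float:
        """Return the seconds granted, honoring the request cap and session budget."""
        granted = requested_seconds if approved else min(requested_seconds, self.per_request_cap)
        remaining = self.session_allowance - self.spent
        granted = max(0.0, min(granted, remaining))
        self.spent += granted
        return granted

    @staticmethod
    def _key(query: str) -> str:
        # Collapse case and whitespace so trivially different queries share a trace.
        return " ".join(query.lower().split())

    def store_trace(self, query: str, verified_result: str) -> None:
        self.traces[self._key(query)] = verified_result

    def cached_trace(self, query: str) -> Optional[str]:
        return self.traces.get(self._key(query))
```

In use, a caller would check `cached_trace` first and call `reserve` only on a miss, so repeated hard queries draw nothing from the allowance. Overdraft and priority pools are left out here; they would be extra policy on top of `reserve`.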

Contracts now specify reasoning SLAs

Service agreements used to focus on uptime and latency. In 2025, buyers started asking for reasoning depth guarantees. An enterprise contract might require that 95 percent of high effort requests include at least one self check, one retrieval pass, and one independent verifier call.

To make this testable, both sides need measurable artifacts:

- Inputs. Task classification that marks requests as low, standard, or high complexity with auditable criteria.

- Process. Logged evidence of steps performed, including tool calls, retrievals, verifications, and early exits.

- Outputs. Pass rates on domain specific benchmarks, like closed book fact checks for compliance, unit tests for code changes, or reconciliation checks for finance.

When the model fails to perform the agreed steps, the vendor owes credits or remediation, just like a missed latency target. Reasoning depth stops being a marketing adjective and becomes a contractual deliverable.
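To show how such a clause might be checked against logs, here is a small sketch. It assumes each logged request is a dictionary with an `effort` label and a list of `steps`; those field names and the step labels `self_check`, `retrieval`, and `verifier` are hypothetical, chosen to mirror the example contract above.

```python
from typing import Dict, Iterable, List, Set

# Hypothetical step labels matching the example clause: one self check,
# one retrieval pass, and one independent verifier call.
REQUIRED_STEPS: Set[str] = {"self_check", "retrieval", "verifier"}


def sla_compliance(logs: Iterable[Dict], required: Set[str] = REQUIRED_STEPS) -> float:
    """Fraction of high effort requests whose logged steps include every required step."""
    high: List[Dict] = [r for r in logs if r["effort"] == "high"]
    if not high:
        return 1.0  # nothing to violate when no high effort requests were made
    compliant = sum(1 for r in high if required <= set(r["steps"]))
    return compliant / len(high)


def meets_sla(logs: Iterable[Dict], target: float = 0.95) -> bool:
    """True when the compliance rate reaches the contracted target."""
    return sla_compliance(list(logs)) >= target
```

The check only confirms that steps were logged, not that they were meaningful, which is why the output benchmarks listed above still matter.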

This aligns closely with a broader shift from leaderboard culture to continuous evaluation. For a market wide view, explore new AI market gatekeepers.

A market for distilled chains and verified traces

Once effort has a price, the byproducts of that effort become assets. Deep reasoning traces can be distilled into smaller models or compiled into reusable prompts and verifiers. A secondary market emerges where teams buy and sell verified patterns of thought, or the distilled models derived from them.

Three patterns are taking shape:

- Private trace vaults. Companies capture expensive reasoning trails and reuse them internally. Think of a repeatable playbook for incident response, procurement disputes, or complex account reconciliation.

- Domain distills. Vendors sell distilled models trained on verified traces for narrow jobs, such as clause triage for legal workflows or narrative generation for audits.

- Standardized verifiers. Third parties offer plug-in verifiers for finance, medical coding, quality assurance, or safety. You bolt them into the high effort pipeline and pay per verification.

Strong controls are essential. Free form chain of thought can leak proprietary strategies or sensitive data. The safer approach is to keep raw traces private and publish structured, verifiable artifacts instead, such as test results, citations to retrieved evidence, and checksums of context.

This interacts with a larger governance question when models or weights circulate widely. For more on that, read once the weights are out.

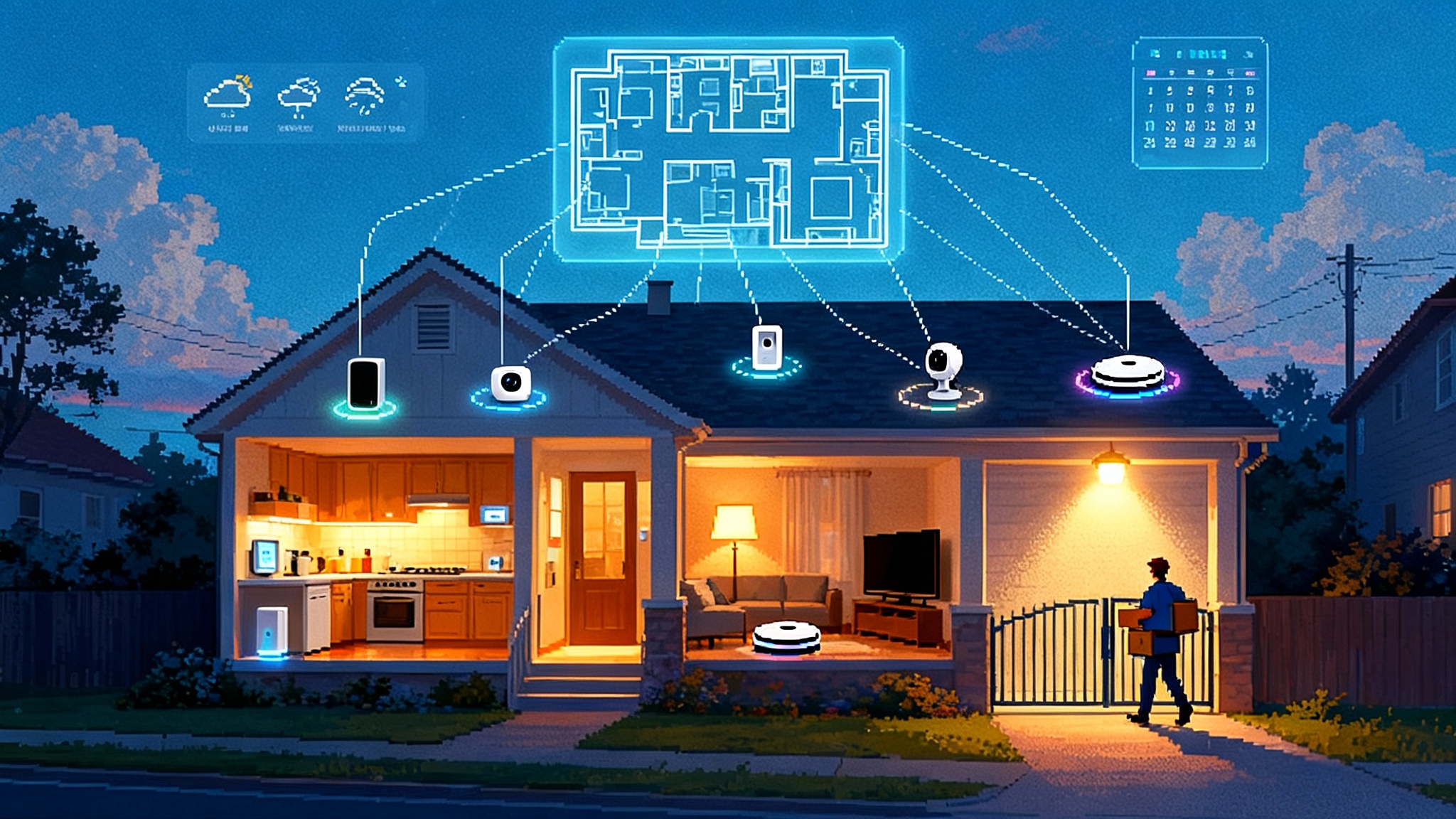

Who deserves more thought

A deeper social question sits behind the interface. When thinking time is metered, who decides how much thought a decision deserves? If a teacher can afford high effort for some students but not others, incentives bend. If a hospital covers one verifier pass for basic plans but three for premium, outcomes diverge. If retail investors use low effort assistants while funds pay for deep multi agent analysis, markets tilt.

Power asymmetries are not new, but effort dials make them explicit and programmable. Leaders should treat thinking time as a scarce public good, not only as a private upgrade. That suggests three commitments:

- Publish minimum thought policies for high consequence domains like claims decisions, health coding, financial risk, and public sector benefits. Document exceptions and review them.

- Audit allocation for fairness. Log effort levels by cohort and trigger alerts if certain groups receive systematically lower reasoning depth without justified risk or value criteria.

- Open the verifiers. Encourage transparent, auditable verifiers so independent parties can check deep reasoning pipelines.
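The allocation audit in the second commitment can start very simply. The sketch below assumes effort is logged per request as a `(cohort, effort)` pair and scores the levels 1 to 3. The scoring and the 0.5 gap threshold are illustrative assumptions, and a flagged cohort is only a prompt for review, since the gap may be justified by risk or value criteria.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

# Assumed numeric scale for effort levels; any monotone scale would do.
EFFORT_SCORE = {"low": 1, "standard": 2, "high": 3}


def effort_by_cohort(records: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Average effort score per cohort from (cohort, effort) log records."""
    buckets: Dict[str, List[int]] = defaultdict(list)
    for cohort, effort in records:
        buckets[cohort].append(EFFORT_SCORE[effort])
    return {cohort: mean(scores) for cohort, scores in buckets.items()}


def flag_gaps(records: Iterable[Tuple[str, str]], max_gap: float = 0.5) -> List[str]:
    """Cohorts whose average effort trails the best served cohort by more than max_gap."""
    averages = effort_by_cohort(records)
    if not averages:
        return []
    best = max(averages.values())
    return sorted(c for c, avg in averages.items() if best - avg > max_gap)
```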

A concrete playbook for teams in 2025

The dial changes how product, operations, procurement, and policy teams work together. Here is a practical checklist.

If you make products

- Put effort in the interface. Add a simple control with three presets. Explain cost and latency in plain terms. Default to Standard but let the system propose upgrades for risky or ambiguous queries.

- Show receipts. Display which checks ran at higher effort. Let experts drill down to verifier summaries, unit tests, retrieval stats, and cross checks.

- Respect attention. Do not leave users idle while deep reasoning runs unless the decision requires it. Offer a preliminary answer with a promise to notify when the deep result is ready.

If you run operations

- Budget thought minutes. Allocate by product and customer tier. Track spend by effort level and by tool category. Review heavy spenders weekly and prune expensive dead ends.

- Route by hardness. Use a lightweight classifier to predict task difficulty and start at the lowest viable effort. Escalate when needed based on calibrated confidence.

- Cache your thinking. Store validated traces and verifier outputs for common hard tasks. Share them across teams with guardrails and data minimization.

If you procure models or services

- Ask for reasoning SLAs. Require evidence of steps performed at high effort, not just output quality. Insist on logs that your auditors can read.

- Test on your tasks. Run an acceptance suite that measures improvements from low to high effort on real workloads. If higher effort does not move your needle, do not pay for it.

- Demand cost transparency. Get clear per effort pricing, including tool calls, retrievals, and verifier runs. Watch for meter drift as prompts, plugins, and context windows change.

If you set policy or govern risk

- Define minimum thought for consequential decisions. For example, claims denials, medical coding rejections, or credit reductions require verifier passes and traceable checks.

- Safeguard against effort discrimination. Monitor effort allocation across protected attributes and customer segments. Investigate gaps and publish remediation steps.

- Encourage open verifiers. Stimulate a market of independent verifiers with clear audit interfaces so regulated decisions remain checkable.

The engineering patterns that make the dial work

Effort is not only a slider. It is a policy that orchestrates a pipeline. Stable patterns are emerging:

- Early exit. Allow the system to stop reasoning once a verifier confirms adequacy. This caps spend while keeping quality high.

- Multi pass with voting. At high effort, sample multiple chains and use consensus to choose the final answer. Tune the number of samples to the budget and risk.

- Tool budgets. Give the planner a fixed allowance of tool calls per effort tier and require justification for each call. Inspect the scratchpad and logs.

- Confidence routing. When calibrated confidence is high at low effort, skip escalation. When it is low, escalate automatically and log the trigger reason.

- Retrieval contracts. Limit retrieval depth and source domains per tier. For example, only trusted corpora at low effort, then expand at higher effort with stronger verification.

- Energy awareness. Track power used by deep reasoning, especially in edge to cloud workflows. The dial influences not only money but also carbon budgets.

These patterns look boring on purpose. Once test time compute is a first class control, ad hoc prompting gives way to repeatable engineering. The dial becomes software, and the software becomes policy.
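Two of these patterns, multi pass with voting and early exit, combine naturally. The sketch below assumes a `sample_chain` callback that returns one candidate answer per call and a `verifier` callback that returns `True` when an answer is adequate. Both are hypothetical stand-ins for a model call and a domain check.

```python
from collections import Counter
from typing import Callable, Tuple


def vote_with_early_exit(
    sample_chain: Callable[[], str],
    verifier: Callable[[str], bool],
    max_samples: int = 5,
) -> Tuple[str, int, str]:
    """Sample up to max_samples answers; stop early when the verifier accepts one.

    Returns (answer, samples_used, reason), where reason is "verified" for an
    early exit or "majority" when the sample budget ran out and votes decided.
    """
    if max_samples < 1:
        raise ValueError("max_samples must be at least 1")
    votes: Counter = Counter()
    for used in range(1, max_samples + 1):
        candidate = sample_chain()
        votes[candidate] += 1
        if verifier(candidate):
            # Early exit: the verifier confirmed adequacy, so stop spending.
            return candidate, used, "verified"
    # No candidate was verified; fall back to the most frequent answer.
    winner, _ = votes.most_common(1)[0]
    return winner, max_samples, "majority"
```

Here `max_samples` plays the role of the per tier budget, and the returned reason string is the kind of field a receipt or escalation log would record.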

What to watch next

- Adaptive pricing. Instead of fixed tiers, expect per decision auctions. The model bids for compute based on estimated value at stake and predicted accuracy gains.

- Open reasoners and closed ecosystems. Open models encourage reuse and local control. Closed models integrate tool use, safety systems, and managed verifiers. Most teams will blend both depending on risk and compliance.

- Edge cognition. As device chips improve, expect low effort on device with cloud escalation for deep thought. This changes privacy envelopes, cost structures, and user expectations.

- Attestation everywhere. As supply chains mature, outputs increasingly carry proofs about how they were produced. The effort dial will integrate with attestation so that buyers can verify which steps actually ran.

The last point loops back to our earlier work on attested AI becomes default and how evidence attaches to results. When the UI for effort meets the infrastructure for proof, trust stops being a vibe and becomes a measurable property.

A final turn of the dial

We used to ask models for answers and hope they had thought enough. In 2025, we ask for a level of thinking and receive an answer priced and timed to match. That is not just a feature. It is a new contract between people and machines that lets us spend cognition like a currency.

The open questions are governance and equity. Who sets the default? Who approves the upgrade? Who gets the receipt? The best teams in the next year will not be the ones with the biggest model. They will be the ones who decide when to think, how much to think, and how to prove that the thinking was worth it. They will wire the dial into their product, their budget, their contracts, and their ethics, then treat thinking time as both a competitive lever and a public responsibility.