The Grid Is Gatekeeper: AI’s Next Bottleneck Is Power

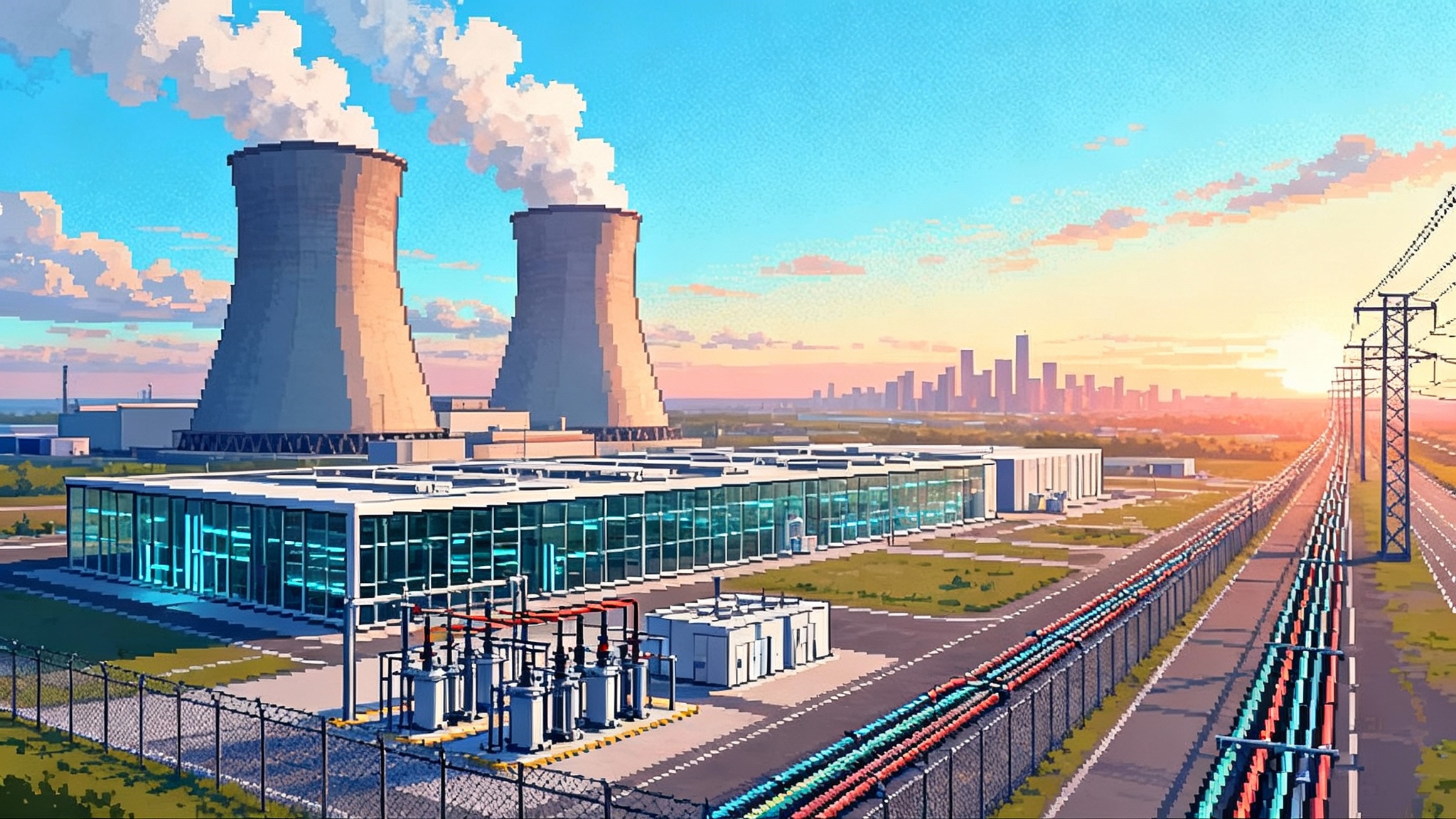

AI’s bottleneck has moved from model design to electricity. Hyperscalers chase nuclear-adjacent sites, regulators tighten scrutiny of behind-the-meter deals, and the grid increasingly decides where cognition can grow.

Breaking: the AI frontier has moved to the switchyard

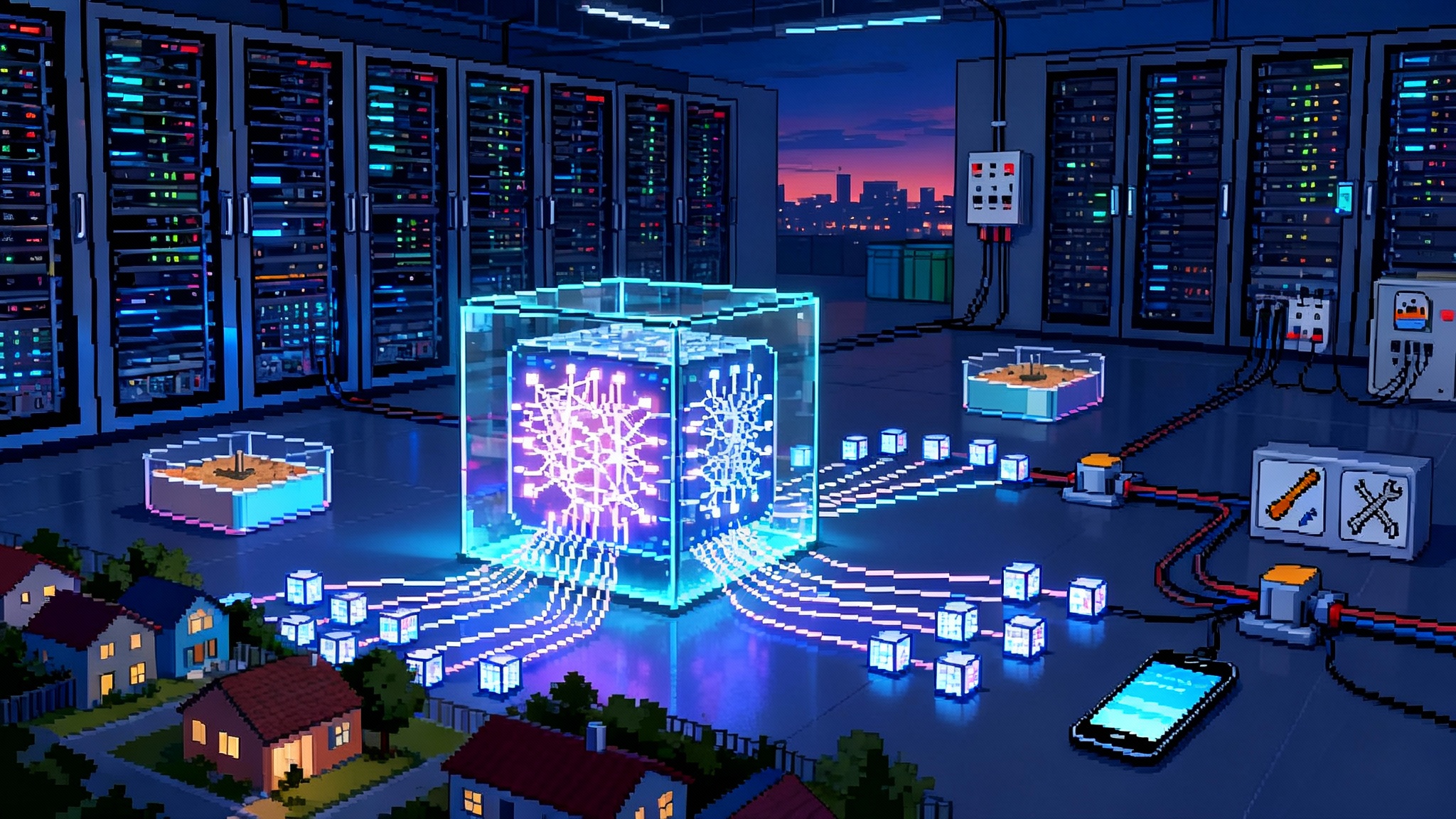

For years, the loudest news in artificial intelligence lived in model cards and benchmark charts. Today the breaking headlines land in utility dockets and transmission maps. In mid-to-late 2025, hyperscalers began committing to a new kind of colocation: not just cages in the same building, but campuses that share a fence line with nuclear power plants. At the same time, planners revised upward the outlook for United States electricity demand and called out data centers as a prime driver. Regulators took notice and started scrutinizing behind-the-meter power deals that let private parties skirt retail tariffs and avoid open access rules. The center of gravity for AI shifted from parameters to electrons.

The new bottleneck is simple. Training and inference need electricity every millisecond. Latency budgets and reliability targets do not tolerate brownouts. Cooling systems cannot coast if compressors lose power. The grid has become the gatekeeper. If you want to scale cognition, you first need to scale capacity, firmed supply, and the wires that connect both to your racks.

Why intelligence is energy constrained

Every computation ultimately moves and flips physical things. Bits flip in transistors. Photons move in fiber. Heat must be removed. Thermodynamics is not a metaphor. It is the operating system under our software. There is a theoretical lower bound, known as the Landauer limit, on the energy required to erase a bit. Modern chips are far above that floor, but the direction is set by physics. Running a trillion-parameter model means driving a vast number of switching events, which means power in and heat out.
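To put a number on that floor: the bound, usually called the Landauer limit, depends only on temperature. The worked value below assumes a temperature of about 300 K, which is an illustrative choice and not a figure from this article.

$$
E_{\min} = k_B T \ln 2 \approx \left(1.38 \times 10^{-23}\ \mathrm{J/K}\right)\left(300\ \mathrm{K}\right)\left(0.693\right) \approx 2.9 \times 10^{-21}\ \mathrm{J\ per\ erased\ bit}
$$

Here $k_B$ is the Boltzmann constant and $T$ is the absolute temperature of the environment. The figure is a floor for erasing a single bit, not an estimate of what any real chip spends.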

We can measure this truth with simple ratios. A data hall’s power usage effectiveness (PUE) measures overhead, but even at very good values the dominant term remains the chips. Model design can help with sparsity, mixture of experts, quantization, and custom kernels. New accelerators can improve joules per token. Yet when scale doubles, the product of computation and time still grows fast. There is only so much efficiency to harvest before absolute megawatts matter more than marginal improvement. We explored this shift when we looked at how the collapsing price of thought changed the way teams plan workloads.
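The overhead ratio can be made concrete with a short sketch. Power usage effectiveness is conventionally defined as total facility energy divided by IT equipment energy, so a value of 1.0 would mean zero overhead. The function names and the 100 MW IT load below are hypothetical, chosen only to show why the chips stay the dominant term.

```python
def facility_power_mw(it_power_mw: float, pue: float) -> float:
    """Total facility draw implied by an IT load and a PUE value."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_mw * pue


def overhead_share(pue: float) -> float:
    """Fraction of total facility power that is not IT load."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


# Hypothetical IT load, for illustration only.
it_load_mw = 100.0
for pue in (1.1, 1.5):
    total = facility_power_mw(it_load_mw, pue)
    share = overhead_share(pue)
    print(f"PUE {pue}: total {total:.1f} MW, overhead share {share:.1%}")
```

Even at the less efficient of the two example values, most of the facility draw is still the IT load itself, which is the point the paragraph above makes.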

If intelligence is constrained by energy, then the competitive edge moves to whoever can secure round-the-clock, low-carbon power at predictable prices and can move that power across congested grids. That is why the action this year clustered around nuclear plants, firm geothermal, large hydro tie-ins, and high-voltage direct current corridors. Bits follow electrons.

The 2025 pivot: colocating with nuclear and revising the load curve

The most striking story of 2025 was the shift from renewable heavy virtual power purchase agreements toward concrete, physical proximity to firm supply. Data center operators announced or advanced sites that abut existing nuclear stations or planned advanced reactors. The logic is compelling. Nuclear offers very high capacity factors, zero onsite carbon emissions, and a stable cost structure once built. Sharing a grid interconnection and substation reduces lead time. Security perimeters overlap. Cooling water is nearby. A back lot becomes a campus edge.

In parallel, utilities and independent system operators raised near- and medium-term electricity demand forecasts. After a decade when load growth in the United States looked flat, planners now see a meaningful climb led by data centers, electrified heat, and industrial reshoring. This is not a blip. It is a structural turn. Utilities that planned for one or two percent growth are revising resource plans. Interconnection queues are being reopened with new assumptions about duty cycles and non-coincident peaks. Transmission planners are looking at corridors that must carry hundreds of additional megawatts to new load pockets where land, fiber, and tax policy are already pinned down.

Regulatory scrutiny also intensified. Behind-the-meter arrangements promise speed and price certainty for developers, but they also raise fairness questions. If a hyperscaler pays a plant directly over a private wire, who funds the shared network that everyone still relies on for reliability and reserves? States want to protect ratepayers. Grid operators want to maintain open access and avoid unintended reliability risks from uncoordinated private networks. Expect more dockets, more hearings, and more conditions placed on bespoke deals.

The strategy shift inside boardrooms

These headlines rewire how executives think about scale. For a decade the questions were model architecture and cluster design. Now two more questions rise to the top of the agenda.

- Where will the next reliable 500 megawatts come from?

- How do we get it to the racks within three years, not seven?

Siting has become a multidisciplinary sport. It is grid topology, county zoning, water rights, labor markets, and local politics. It is a risk register that starts in the switchyard and runs to the courthouse. Companies that can manage this complexity will own the future of compute. The smartest teams now treat siting, interconnection, and scheduling as a single portfolio problem rather than three independent tracks.

The thermodynamic floor meets political ceilings

Physics sets the floor. Politics sets the ceiling. You can plan a campus like a giant heat engine with power in, useful work out, waste heat captured for district usage, and water managed in a closed loop. None of it matters if permits fail, if a substation is delayed, or if a single county board meeting turns hostile.

Transmission is the quiet giant. You can build a low carbon plant next to your data center. You still need the regional grid to carry reserves, black start capability, and the diversity that only a network can provide. Yet interregional lines are the hardest projects to permit and finance because benefits cross borders while costs land on specific parcels. This is why high voltage direct current deserves attention. It delivers bulk power over long distances with lower losses and with converter stations that can be built near load. Rights of way can often be shared with highways or rail, easing the social footprint.

Behind-the-meter supply brings its own politics. These deals can deliver speed. They can also undermine the social contract if they look like special treatment. The path forward is transparency and cost sharing. If a private wire avoids some retail tariffs, then the campus should pay separately for reliability services, system planning, and shared upgrades that benefit the region.

An acceleration program for capacity and cognition

If you believe intelligence creation is now gated by electricity, the rational response is to build more capacity and to use it better. Here is a program that translates that idea into action.

1) Advanced nuclear at meaningful scale

- Uprate existing reactors where possible. Plant upgrades can add tens of megawatts at a fraction of the cost and time of new builds.

- Pursue life extensions for the current fleet. Every avoided retirement buys decades of firm, carbon free power.

- Demonstrate and then replicate advanced designs. Small modular reactors and advanced thermal or fast designs must prove cost and schedule at a few sites, then shift to cookie cutter replication with standardized modules and shared supply chains.

- Pair early units with hyperscaler campuses under long term offtake. The buyer brings capital, certainty, and load that matches round the clock output. The operator gets a bankable path to commissioning.

2) A national high voltage direct current spine

- Identify three or four anchor corridors that stitch resource basins to load: Plains wind to Midwest data centers, Desert solar to Mountain compute, Hydro and nuclear east to coastal hubs.

- Use performance based siting timelines. If developers meet environmental and community requirements within a fixed window, agencies must decide on schedule. Add a shared benefits fund for communities along the route.

- Standardize converter station designs to cut years of engineering time and bring down costs through repetition.

3) Faster siting and permitting without lower standards

- Create one stop shops inside states with dedicated teams for data center energy projects. Bundle environmental review, grid interconnection, and water management into a single docket with one comprehensive record.

- Use enforceable schedules for interconnection studies. Queue reforms should prioritize projects that are site ready, have firm offtakers, and can demonstrate equipment procurement.

- Require fair share payments for reliability services from behind the meter campuses. Publish the methodology so other entrants can plan around it.

4) Demand aware schedulers

- Move from oblivious job submission to energy informed scheduling. Training runs can be staggered to align with periods of surplus. Inference can be steered to regions where nodal prices are low and clean energy is abundant.

- Expose energy cost and carbon intensity to developers with clear service level agreements. Make the energy price of a token a first class metric.

- Treat cooling as a dispatchable asset. Thermal storage and chilled water can shift load out of peak hours without sacrificing chip utilization.

- Add verifiability to scheduling so buyers and regulators can trust that jobs actually ran when and where the carbon and reliability budget said they should. This is a natural extension of the way attested AI becomes the default.
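The first bullet in this list, energy-informed scheduling, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production scheduler: the `HourSignal` type, the blended score, the `carbon_weight` default, the region names, and every forecast number are hypothetical. It picks the cheapest contiguous window for a deferrable training job and the best current region for inference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HourSignal:
    hour: int                 # index into the forecast horizon
    price_usd_per_mwh: float  # hypothetical nodal price forecast
    carbon_g_per_kwh: float   # hypothetical grid carbon intensity forecast


def score(sig: HourSignal, carbon_weight: float) -> float:
    """Blend price and carbon into one number; lower is better."""
    return sig.price_usd_per_mwh + carbon_weight * sig.carbon_g_per_kwh


def best_start_hour(forecast, duration_h, carbon_weight=0.1):
    """Start hour of the lowest-scoring contiguous window for a deferrable job."""
    if not 0 < duration_h <= len(forecast):
        raise ValueError("duration must fit inside the forecast horizon")
    scores = [score(s, carbon_weight) for s in forecast]
    window = sum(scores[:duration_h])
    best_total, best_idx = window, 0
    for i in range(1, len(scores) - duration_h + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        window += scores[i + duration_h - 1] - scores[i - 1]
        if window < best_total:
            best_total, best_idx = window, i
    return forecast[best_idx].hour


def pick_region(current, carbon_weight=0.1):
    """Region with the lowest blended score right now, for steering inference."""
    return min(current, key=lambda region: score(current[region], carbon_weight))


# Made-up forecast values, for illustration only.
prices = [80, 60, 30, 25, 70, 90]
carbon = [500, 400, 200, 150, 450, 550]
forecast = [HourSignal(h, p, c) for h, (p, c) in enumerate(zip(prices, carbon))]

start = best_start_hour(forecast, duration_h=2)
region = pick_region({"plains": forecast[2], "coast": forecast[0]})
print(f"start training at hour {start}; route inference to {region}")
```

A single weighted score keeps the sketch short. A real system would more likely treat carbon and reliability limits as constraints and would need the verifiable logging described in the last bullet.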

5) Energy frugal model and system design

- Design for sparsity first. Make mixture of experts, retrieval, and compression the default instead of chasing dense scale alone.

- Quantize more aggressively. Accept small losses in quality for large gains in power draw where the use case allows it.

- Use specialized silicon that hits the best joules per token for target workloads. General purpose performance is a luxury in a power constrained world.

- Reuse wherever possible. Fine-tune small adapters on top of large base models instead of retraining giants from scratch. Cache intelligently across user sessions to avoid recomputation. These choices align with the economics outlined when we looked at how the collapsing price of thought reshaped planning.

These moves are not optional extras. They are the only way the supply side and the demand side meet on a timeline that matches ambition.

New corporate playbooks

Hyperscalers will need to behave like utilities, and utilities will need to behave like platform companies.

For hyperscalers and model labs

- Build internal grid teams with utility veterans who can read power flow studies and negotiate interconnection agreements.

- Secure round-the-clock, carbon-free supply with real deliverability, not paper matching. Back it with onsite or near-site firming where possible.

- Co-invest in transmission that connects anchor campuses to regional diversity. Use long-term contracts that survive executive turnover.

- Treat water as a first class constraint. Favor designs that recycle and capture heat for neighbors when climate and community needs align.

For utilities and grid operators

- Publish transparent hosting capacity maps for large loads and update them quarterly.

- Create tariff paths that allow large buyers to fund upgrades in exchange for predictable timelines and cost certainty.

- Integrate demand aware signals into default interconnection agreements so schedulers and system operators speak the same language.

- Measure and report reliability outcomes for large campuses to build trust with regulators and the public.

For policymakers

- Align incentives with reliability and carbon outcomes. Reward projects that bring firm, clean capacity and connect it to constrained load pockets.

- Protect the open access nature of the grid while allowing private investment to accelerate delivery. Do this with clear rules for cost allocation and reliability payments.

- Fund a small number of flagship high voltage direct current projects as national infrastructure. The private sector will follow when the template is proven.

The deeper question: who decides where cognition can live

When power becomes the scarcest input, capacity turns into a permit for thought. If a training run needs 300 megawatts for sixty days, that load crowds out something else unless we expand supply. Someone decides. It might be a utility planner who allocates interconnection upgrades. It might be a state commission that approves a special tariff. It might be a grid operator that curtails during a heat wave. It might be a county board that approves a water allocation. It might be a corporate procurement team that selects a site based on tax treatment and land prices.
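For scale, the training run in that example works out as follows, assuming the 300 megawatts is drawn continuously for the full sixty days (a simplification, since real runs ramp up and pause):

$$
E = P \times t = 300\ \mathrm{MW} \times 60\ \mathrm{days} \times 24\ \mathrm{h/day} = 432{,}000\ \mathrm{MWh} = 432\ \mathrm{GWh}
$$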

In effect, we are drawing a map of where cognition is allowed to exist. It will cluster where firm, low carbon power is abundant, where transmission is strong, and where communities accept the trade. That map will shape which languages models learn well, which industries get the first drafts of new tools, and which regions build the talent flywheel around them. If we drift, the map will be drawn by accident and path dependence. If we plan, the map can align with national resilience, carbon goals, and shared prosperity. The governance stakes are high, which is why questions about who governs forkable cognition feel newly practical rather than abstract.

There is a second layer. As long as the grid can curtail or prioritize loads during stress, the system also has levers over when and how cognition operates. This is not a call for content control. It is a reminder that a society that allocates power also allocates the time budget of thinking machines. We must design these controls with care. Reliability rules should be transparent and technology neutral. Emergency actions should be rare and auditable. The default state should be open access where the next token is not subject to arbitrary veto, only to the same physical limits that govern every industrial load.

What to do this quarter

- If you run an AI program, quantify your energy intensity per dollar of value created. Track joules per token and megawatt-hours per model milestone. Put it on the same dashboard as latency and quality.

- If you site data centers, build a shortlist of nuclear adjacent parcels and locations near hydro or geothermal. Pair it with a transmission readiness score.

- If you design chips or models, set power budgets at the start. Treat a watt saved at the die as gold downstream in cooling and substations.

- If you regulate, open a docket to define fair share payments for behind the meter deals and publish a template that others can use.

- If you plan transmission, pick one corridor and move it from map to material. The second will be easier. The third will be cheaper.
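The two metrics in the first bullet of this list, joules per token and megawatt-hours per milestone, are simple to compute once power draw is metered. The sketch below assumes you can sample average power, duration, and token count per interval. The `EnergySample` type and the sample values are hypothetical, and whether the metered power includes cooling overhead is a choice you would need to state on the dashboard.

```python
from dataclasses import dataclass

JOULES_PER_MWH = 3.6e9  # 1 MWh = 1e6 W * 3600 s


@dataclass(frozen=True)
class EnergySample:
    avg_power_kw: float  # average metered draw over the interval
    duration_s: float    # interval length in seconds
    tokens: int          # tokens processed in the interval


def energy_joules(sample: EnergySample) -> float:
    """Energy used in the interval: watts times seconds."""
    return sample.avg_power_kw * 1000.0 * sample.duration_s


def joules_per_token(sample: EnergySample) -> float:
    """Energy per token for one interval."""
    if sample.tokens <= 0:
        raise ValueError("sample must contain at least one token")
    return energy_joules(sample) / sample.tokens


def milestone_mwh(samples) -> float:
    """Total energy across all intervals that make up a model milestone."""
    return sum(energy_joules(s) for s in samples) / JOULES_PER_MWH


# Made-up sample: 500 kW for one hour while processing 9 million tokens.
sample = EnergySample(avg_power_kw=500.0, duration_s=3600.0, tokens=9_000_000)
print(f"{joules_per_token(sample):.1f} J/token")
print(f"{milestone_mwh([sample, sample]):.2f} MWh for two such intervals")
```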

The ending that is also a beginning

In 2025 the story of AI is not a story about clever prompts. It is a story about turbines, transformers, and trust. We are trying to teach machines to reason, then we ask the grid to keep time for them. The physics is unforgiving. The politics is unavoidable. The opportunity is real. If we build firm, clean capacity, unlock the wires that move it, speed up the permits that govern it, and design models that respect it, we will not just keep up with demand. We will direct it.

The grid has become the gatekeeper. That is not a dead end. It is a design brief. The winners will write their model weights with watts, their roadmaps in transmission line miles, and their ethics in the way they share capacity with the world around them. The rest will watch as their clusters sit underutilized, waiting for a substation that never comes.